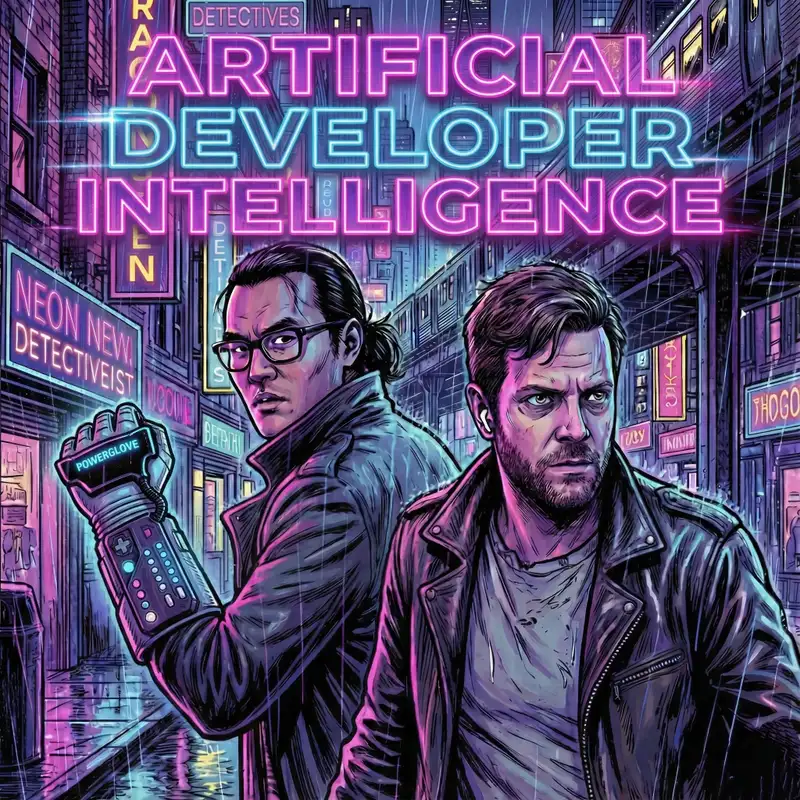

Episode 12: The OpenClaw Saga, How AI Affects Programming Skills, and How Vibe Coding is Addictive like Gambling

Shimin (00:16)

Hello and welcome to Artificial Developer Intelligence, a podcast where two software engineers chat about the ever-changing landscape of AI-enabled programming. We are still America's number one podcast when it comes to generating training data for future AI historians. I am Shimin Zhang and with me today is my co-host, Dan "he is in his dark flow era" Lasky. Dan, how are you doing today?

Dan (00:31)

I would have worn a slightly darker hoodie if I knew that I was in my dark flow era. You know, we'll coordinate it better next time. That's probably true.

Shimin (00:47)

I feel like you've always been in your dark flow era.

All right, on today's podcast, as per usual, we are starting off with the news thread mill where we have a couple of articles about Anthropic and their AI constitution or related to it, my favorite topic, along with a new OpenAI product, Prism.

Dan (01:04)

Your favorite topic.

And then we'll be going into the tool shed where we actually have a pretty big variety of tools today. I'm going to spoiler it a little bit and say a lot of them have to do with Clawdbot. But yeah, we also will be talking about open coding, the new Trinity Large model and a couple other Clawdbot-related things. Or I guess now it's OpenClaw, excuse me.

Shimin (01:34)

You forgot MoltBot, the transitional name that lasted all of 24 hours.

Dan (01:37)

Yeah.

Exactly.

Shimin (01:41)

Yeah, after that we have post-processing, where we have an article titled "My Five Stages of AI Grief."

Dan (01:48)

which is not trolley at all. And then we're actually going to be doing a little bit of a deep dive. I would say it's not like a deep ocean dive, but like, we need scuba gear, but we don't need those crazy helmets for the serious pressure on this one. So it's "How AI assistance impacts the formation of coding skills," which is, you know, as per usual, just reading stuff off the Anthropic blog because Rahul isn't here today.

And then the second one we're going to talk about is "Breaking the Spell of Vibe Coding."

Shimin (02:17)

Right, and no Dan's Rant segment this week, but as always, we are going to have Two Minutes to Midnight, where we have a couple of topics about where we are in the AI bubble, if there is a bubble.

Shimin (02:28)

So to get us started, first up we have an article from Ars Technica titled "Does Anthropic believe its AI is conscious, or is that just what it wants Claude to think?" Dan, why don't you?

Dan (02:42)

So it's really,

it's about your favorite topic, which is Claude's Constitution document. ⁓

It was interesting because they interviewed a couple of folks for the article that ⁓ aren't actually, I guess, Anthropic refused to comment on it. So they needed to get some other folks. So they pulled in ⁓ Simon Willison and ⁓ he made a few comments about like, I'm very confused about Claude's moral humanhood stuff. ⁓ And so

Shimin (03:03)

Hahaha

Mm-hmm.

Dan (03:19)

His point was that he believes that they did in fact train it in. So I guess there was some question about that earlier on in the article. And but he still doesn't really get like why they're doing it. And then there's a whole bunch of stuff where they like sort of talk about the evolution of the soul document that I found really fascinating because apparently when it started, it was originally just kind of like guardrails, right? Similar to like what you've seen for a lot of the

Shimin (03:40)

Right.

Dan (03:44)

alignment stuff, right? Where it's like, always respond with the best of intentions. Don't tell people how to make explosives. Like, try not to lie to users, et cetera. And now it's evolved into this 10,000 token, I think it's 30,000 line, like, set of giant guidelines that's trained directly into the weights instead of being injected as a system prompt.

The other thing I thought was interesting as they talked about like they not only have a philosophy PhD who works on alignment there and contributed to the soul document ⁓ but they also have it reviewed by a board of people that included two clergy members. So it really kind of, you know, I guess from the perspective of a quote unquote soul document that makes sense.

Shimin (04:28)

Mm-hmm. Yep, like.

Dan (04:35)

⁓ What else did I take away from this that was just fascinating? I don't get into the philosophy side as much as Shimin does, ⁓ I just couldn't leave this be because they went into a lot more detail than some of previous stuff that I'd seen on it.

Shimin (04:52)

Yeah, my takeaway from this article was kind of the commercial aspect of it, to move away from the philosophical aspect for a second. They spent a lot of time talking about how, if the AI has agency, then Anthropic may not be on the hook as much for any kind of negative things that Claude does. So

Dan (05:16)

Hmm.

Shimin (05:18)

That's a facet that I've not considered before. ⁓

So then this idea of, when Claude does something bad, Anthropic can be like, we tried to do our best, but little Johnny's, there's always a bad seed. You couldn't train that bad behavior out of it. Then they're able to kind of get away with a lot more. So in my mind, it's always interesting to follow, you know, what Anthropic actually does when money is involved, as opposed to

Dan (05:48)

Mm-hmm.

Shimin (05:50)

you know, just the soul document itself, because like we were talking about previously, the soul document is, it's good marketing. And I, I maybe believe I buy into it a little more than you do, but you know, at the end of the day, if Anthropic is still, you know, working with Palantir and if they were providing Palantir with a model with all the guardrails off, then that's a very different story that we're talking about here.

Dan (06:17)

it is interesting too, where it's like how much of this is sort of aspirational, right? Like we aren't there yet, but maybe we're getting close enough that they feel like it's necessary, ⁓ to kind of do some of these things too. So, yeah, it's part of the fascination of the space. I think, right. Is there's like the financial aspect that we try to cover on this a little bit. There's the very real.

impact to software developers' jobs at least, and possibly others. And then there's this, like, you know, are we actually getting to a point where there's gonna be these sort of silicon creatures floating around? You know, it doesn't feel like it sometimes, but other times you get little glimpses of it. It's like, whoa. Maybe I read too much

Shimin (07:01)

It's cyberpunk, Dan's favorite topic. Though it turned out to be really your least favorite topic. So we'll see. Do you have anything else you want to add to this?

Dan (07:11)

The only other thing that I found kind of odd was the focus on, like, suffering, in that they talked about it within Anthropic themselves. So I guess there's folks at Anthropic that have gone on the record saying that, first of all, they've hired a model wellness person. Like, it's actually someone on staff whose title is like model wellness.

I don't know if that makes them like an AI therapist or what, but it was kind of interesting. And then the second piece was like, someone else had gone on the record saying, should we give models the ability to bail out on a task that they don't want to do because they find it onerous for whatever reason? And the immediate thing that popped in my head as soon as I read that was the sort of Cory Doctorow

Shimin (07:53)

Mm.

Dan (08:03)

article that we read a couple weeks, was it two weeks ago or a week ago, about the reverse centaur. So it's like humans doing stuff at the bidding of AI, you know, to basically either fuel it or, you know, it's sort of like a supervisor. And the first thing I thought of is like, yeah, I don't want to do this, so let me go get some humans to do it. And I'm like, wait, isn't this backwards? Like, computers are supposed to be helping us do the things better. But yeah

Shimin (08:04)

Mm-hmm.

It'll be interesting if, you know, humans are more morally flexible than the potential silicon creatures that we've created. Yeah. ⁓

Dan (08:39)

Yeah.

Well, I guess that's what they're

banking on. That's why they're putting the Constitution is, you know.

Shimin (08:50)

Yeah,

I, you know, we had mentioned this the other week about the constitution, there is a passage in there about, ⁓

the weights for all Claude models will be preserved in one way or another by Anthropic. And that seems like something that just does not make any sense to include unless either you believe that the models are conscious in some way and have a self-preservation instinct, or at least because it is trained on

Dan (09:07)

Mm-hmm.

Shimin (09:23)

human knowledge and therefore has human-like incentives, then talking to it that way allows it to perform a little better. I'm still torn on which one of the two interpretations I believe, but I think they're equally valid.

Dan (09:33)

Mm-hmm.

Yeah. I mean, there's

definitely some, has been some, at least anecdotal evidence towards that over like there was a long thread around prompting a long time, long, long time ago. It feels like five years at this point where it was like, if you ask the models, please and thank you, they perform better, you know, or the like, my grandma's dying. You have to do this. Like actually worked for a while. So it's a jailbreak.

Shimin (09:59)

yeah, I was, I was at

the, I was at a, AI meetup over the weekend or last week and somebody we were talking about prompt injections and somebody just mentioned, yeah, like the grandmother thing. And then of course everybody just like nodded like, yeah, what world do we live in?

Dan (10:10)

Yeah, you know, like grandma.

It's like shorthand at this point for OG jailbreaks.

Shimin (10:16)

I loved it. Okay. ⁓

That was right after I got offered the Red Bull flavor weed. So ⁓ very cyberpunk, this world we live in. And the reason I brought up the economic aspect is, you know, if Anthropic is saying all these nice things, but then is providing Palantir or the US government with a completely ungated version of the model,

Dan (10:29)

pretty good.

Shimin (10:43)

then my priors will put all this stuff towards just marketing speak. And this week, we do have an exclusive report from Reuters titled "Pentagon clashes with Anthropic over military AI use, sources say." And the gist of the article is that the Pentagon has a 200 million contract with Anthropic, and Anthropic is not offering them a fully ungated version of Claude, and that is causing some conflict. So I would put this as one piece of evidence on the "all this talk is not just marketing" side. Yeah, they are drinking the Kool-Aid. It may be Kool-Aid, but they're drinking it with the rest of us.

Dan (11:27)

Not cheap. They're selling what they're, yeah, they're putting out. Yeah.

Shimin (11:36)

Just me, maybe. You're a Hope Water Only guy. ⁓ Does all that sht-

Dan (11:36)

Hibiscus tea.

Actually, I'm drinking

a very fruity tea right now. So, you know, yeah.

Shimin (11:45)

are you? Talk about.

I love my teas floral. Yeah, not much else to say. This is not on the record. But coming from Reuters, I trust that there is indeed some sort of a conflict between the two.

Alright, moving on.

In OpenAI news, since we spent too much time with Anthropic, according to some

Dan (12:06)

Yeah.

Well, we only have, what, four or five mentions I think today, so it's weighted pretty heavily. But yeah, let's talk a little bit of OpenAI. So OpenAI has launched a new scientific workspace program called Prism. So it's free to anyone with a ChatGPT account. And it's not meant to sort of go off and do research, it's meant to be more like a platform where you can leverage AI to help you with your research. And when I first read this article, I immediately thought about when I was on the plane headed out to my vacation, like a little while ago. My seatmate was on ChatGPT, like probably for a good two hours, on the plane Wi-Fi. And he didn't say a word to me. And then eventually he,

Shimin (12:55)

Mm-hmm.

Dan (13:02)

⁓ started talking to me and of course asked me when I told him I was a software developer he was like, do you do AI stuff? And was telling me how much it had like, so he was like a professor and a researcher and also like a medical doctor and was telling me how much it had revolutionized his own research, which I found kind of fascinating. So I'm sure he will be pretty excited about ⁓ this development.

Shimin (13:17)

Mm-hmm.

Dan (13:27)

but yeah, other than that, to me it kind of seems like this is largely a set of very careful tooling, sort of in the way that Claude Code is for developers, that's meant to make scientific workflows easier. For example, a lot of folks in academia use LaTeX to actually format their docs. And so it has LaTeX support

Shimin (13:51)

Mm-hmm. Yep.

Dan (13:54)

built in, which is pretty smart. And then you can also use GPT itself to help you with LaTeX, because I'm sure that alone is probably a pretty good use for LLMs. And then it also has the ability to open a regular ChatGPT window, and then that window can use your sort of Prism canvas as the

Shimin (14:07)

Yeah. ⁓

Dan (14:19)

context to answer questions, so you can sort of chat with the model about your workspace too, which is kind of neat, but also not really groundbreaking. So I would argue nothing super groundbreaking here, but neat to see them kind of trying to spread the agentic love into other areas that could maybe benefit from it.

Shimin (14:36)

Yeah, do you remember ⁓ OpenAI's app marketplace that was like all in the news a while back? And I think their hope back then was for everyone to ⁓ tools like Prism to create these like specialized ⁓ agents or specialized Codex coding assistants.

Dan (14:41)

Mm-hmm.

Mm-hmm.

And instead

we've kind of gone the other way with MCP and everything else, where the world has come to the LLM instead of the LLM going out to the world. Not to say it isn't being used everywhere, right, in lots of other contexts. But for, I think, a lot of folks' traditional usage, it's more that you're bringing in outside sources to the LLM versus the other way around.

Shimin (15:20)

And both OpenAI and Anthropic released ⁓ standalone kind of, ⁓ I want to say, generic white-collar helper apps, right, between, I think, the Anthropic one is called Cowork. And I don't remember what the OpenAI one is called. ⁓ But it is slowly starting to trickle from a mostly used by programmers

Dan (15:33)

Mm-hmm.

Shimin (15:48)

⁓ kind of a specialized tooling into a well it's actually a general enough tool to to work with all electronic ⁓ documents I think I

Dan (15:57)

I will

tell you that the one thing that I probably would use Cowork for personally is cutting out the middleman when it comes to Excel formulas. That was one of the early, early LLM use cases that I found that was really great. As a software engineer, I find Excel formulas incredibly limiting and really frustrating. Like, give me a bloody programming language so I can do the thing that I want,

VLOOKUP, IF this, whatever. And so yeah, it was like, I could literally just paste the JavaScript snippet that I wanted, have it translated into, yeah, into an Excel formula and then paste that into Excel. Now I don't have to do that. I can just tell it exactly what I

Shimin (16:39)

⁓ it translates. That's very clever.

Yeah, I mean, VBA script is a thing, but it's a pain to use.

Dan (16:52)

Yeah, that's the one thing that I like about a lot of people hate on sheets that are like really hardcore Excel users. They don't like Google Sheets because they're like, it's not quite the same and everything. I'm like, yeah, but the one cool thing that I love about it is you can just write a JavaScript function and it becomes a callable function in your sheet, which is pretty neat.

Shimin (17:07)

Yeah.

All right, moving on to the tool shed, we have quite a number of new tools coming out this week. The first one I want to point out is the Allen Institute for AI's new coding agent called Sera. And the idea here is to train a small but specialized coding agent

using a more powerful model like Opus 4.5 as an example. And they were able to get fairly good performance from it. We see here the 32 billion parameter model has a 49.5% success rate. I think this is OpenBench or, yeah, SWE-bench. SWE-bench Verified. And the 14

billion parameter model has a 41% success rate. And these were both trained by GLM-4.6. So the entire chain is open weight. And how they did this was, they talked about three different techniques. Self-verified generation: so basically, they trained the model on your code base with a more powerful model where

the more powerful model was able to generate a lot of synthetic bugs. So it's kind of a synthetic data generation technique where you take the existing code, you create believable, and they came up with 51 common bug patterns for these code bases. So they used the more powerful model to generate these more believable synthetic bugs and then train it. ⁓

simulating a programming workflow. ⁓ These small models are designed to be able to be trained cheaply on large proprietary databases, the code bases and then work as an in-house model, essentially.
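A minimal sketch of the synthetic-bug idea described in this turn. In the episode, the bugs come from a more powerful model working from a catalog of common bug patterns; here a single rule-based mutation (turning `<` into `<=`) stands in for that model, and the names `FlipComparison` and `make_training_pair` are hypothetical, not taken from the project being discussed.

```python
import ast


class FlipComparison(ast.NodeTransformer):
    """Stand-in for one bug pattern: turn the first `<` into `<=` (off-by-one)."""

    def __init__(self) -> None:
        self.changed = False

    def visit_Compare(self, node: ast.Compare) -> ast.Compare:
        self.generic_visit(node)
        # Mutate only once so the synthetic bug stays small and believable.
        if not self.changed and isinstance(node.ops[0], ast.Lt):
            node.ops[0] = ast.LtE()
            self.changed = True
        return node


def make_training_pair(clean_source: str) -> dict:
    """Return a (buggy, fixed) pair built from known-good code."""
    mutator = FlipComparison()
    buggy_tree = mutator.visit(ast.parse(clean_source))
    ast.fix_missing_locations(buggy_tree)
    return {
        "buggy": ast.unparse(buggy_tree),               # input shown to the small model
        "fixed": ast.unparse(ast.parse(clean_source)),  # target it should produce
        "mutated": mutator.changed,                     # False if the pattern did not apply
    }


CLEAN = "def in_range(i, n):\n    return i < n\n"
pair = make_training_pair(CLEAN)
print(pair["buggy"])
print(pair["fixed"])
```

Each pair gives the small model a realistic "find and fix the bug" task drawn from an existing code base, which is the data-generation step the turn describes; the training itself is out of scope for this sketch.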

Dan (19:14)

So it's actually on. Yeah, that's pretty cool.

Shimin (19:16)

Yeah, it's pretty neat.

Dan (19:17)

It reminds me a little bit of a model I just saw on Hacker News, I think it was a couple of weeks ago, called Sweep, Sweep Next Edit. So it's like a 1.5 billion parameter model, i.e. pretty small, definitely small enough you can run it on most laptops, not even fancy Macs and stuff. And they did kind of the same thing where they just basically took a giant model and jammed.

Shimin (19:26)

Mm-hmm.

Dan (19:44)

Or at least that was my understanding based on the post that I read and like basically used it to teach a tiny model about only auto complete and nothing else. So it could just do auto complete like really well in a relatively small model. and like folks in the HN Thread were like really raving about it because you can just run it locally and it doesn't take too much. And so it's pretty fast too. ⁓ and there was a couple like

Shimin (19:53)

Mhm.

nice.

Dan (20:07)

plugins for existing IDEs and stuff where you could just spin it up behind the scenes.

Shimin (20:12)

Going back to the retro days of 2023 and 2024, when we were using Copilot in our... Copilot completes in our IDEs. Yeah.

Dan (20:23)

Well, I mean,

but like, what if you're on a plane and the Wi-Fi on the plane dies and like you've gotten used to these workflows, like you're at the mercy of what you can run locally. So ⁓ it's nice to be able to use some of the tools, you know, even if they're comparatively lobotomized to their ⁓ big brothers, big brothers, big sisters.

Shimin (20:30)

Yeah. Yeah. Yeah.

Yeah, the next model I want to briefly talk about is the Qwen 3 Coder Next, another small model that is also fine-tuned to be a coding-only model. This one is 80 billion parameters, which is a medium-sized parameter, a medium-sized model

compared to, you know, almost-trillion-parameter models like the Kimis and the DeepSeeks, but it's able to have similar performance as the much larger DeepSeek and Z.ai models. So I thought it's another one to take a look at, 'cause it's also open weight and it's much easier to run. I think 80 billion parameters is possible on beefier consumer GPUs.

Dan (21:32)

And GLM-4.7's on there too. I spent a couple hours last weekend getting that to run. I think it was Q2 or something like that. It was extremely quantized and I got about 0.5 tokens a second, which is not great. But hey, you know.

Shimin (21:49)

Yeah.

So maybe think about giving the Qwen 3 a shot because only 80 billion parameters and you don't have to Q2 it.

Dan (21:52)

I might. Yeah, I should.

True, probably Q8, but. ⁓
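A back-of-the-envelope sketch of why the quantization level matters for the 80 billion parameter model mentioned above. It assumes "Q2" and "Q8" mean roughly 2 and 8 bits per weight and counts weights only; real quantized formats add overhead for scales, and inference also needs memory for the KV cache and activations. The function name is hypothetical.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    # billions of parameters * bits per weight / 8 bits per byte = GB
    return params_billion * bits_per_weight / 8


for bits in (2, 4, 8, 16):
    print(f"80B parameters at {bits}-bit: ~{weight_memory_gb(80, bits):.0f} GB")
```

Under these assumptions, halving the bits per weight halves the footprint, which is the trade-off between fitting a model on local hardware and losing quality to heavy quantization.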

Shimin (21:59)

And lastly, we have Trinity Large.

Dan (22:01)

Yeah. So we've talked about Arcee before. Like, one of the things that they do that's really cool is publish their whole data set and the steps to actually be able to build the model. And it's also kind of neat to see an American company that's doing open-weight stuff. 'Cause usually a lot of, I mean, if you look at this, even the graphs that you've got up on screen, they've got like Z.ai,

Shimin (22:29)

Yep.

Dan (22:29)

DeepSeek, and like really the only other one that's on there is Meta, right? That's like a U.S.-based company doing open-weight stuff, or they were doing it anyway. So, yeah, we talked, I think, before about the Trinity small or Trinity medium that they'd already previously released. So, you know, the training for this guy has been going on in the background, probably while we were talking about it even. So now they've got their Trinity Large model that they're releasing.

⁓ And it, you know, for an open weight model, it's staying with the curve, I feel like. But again, the neat part about what they're doing is ⁓ really the like fully in the open part. It's not just the weights. It's like the whole thing, assuming you have an enormous amount of compute to reproduce the large.

Shimin (23:20)

And they have, they talked about their financials. They've spoken about how much this model cost in training, which is $20 million. That's reasonable. That's like around the rumored cost of the original DeepSeek models.

Yeah, just to show you don't need to have a large group lab to train state-of-the-art models.

Dan (23:36)

which is kind of...

Also mind blowing to me that like 20 million is like, no big deal. Let's just drop that on a model.

Shimin (23:47)

What is money? Yeah. Yeah, I'm excited to see how they compete with the Chinese labs. I do think there is a need for better and more US open-weight models. But now that Meta is kind of out of the game, we'll have to see.

Dan (23:48)

Yeah.

Shimin (24:04)

All right, and of course, it's not a tool shed discussion without talking about Clawdbot slash Moltbot slash OpenClaw. Yes.

Dan (24:16)

slash

there's lobsters everywhere.

Shimin (24:18)

There's also MiniClaw that I saw on Hacker News. That was some guy's fork of it. What is OpenClaw? OpenClaw is a personal assistant that you can connect to any of the frontier models and get it to help you with things.

Dan (24:36)

It's a Node.js app.

Shimin (24:37)

It is a Node.js app, yes.

Dan (24:39)

but it talks to you over a variety of different channels, right? So you can set up the channel of your choice depending on what you're hosting it on. Telegram, WhatsApp, you can even do iMessage if you're running it on a Mac.

Shimin (24:51)

Yeah. So it's a personal assistant framework that has multiple interaction points and it has skills that you can download from ClawHub, which I think is partially why this became so popular, and it really did blow up in the internet lore. Last week we were talking about whether or not this was an AI-orchestrated marketing campaign. But I think this week we got, at least some people bought into the campaign,

me included. ⁓

Shimin (25:20)

So I gave it a shot over the weekend. I installed OpenClaw in a Dockerized environment. One of the biggest issues with a personal assistant is it is not very good unless you give the assistant your personal information. And I am too much of a chicken to do that. So I did not do any of it. I just used it essentially like

Dan (25:28)

Alright.

Mm-hmm.

Shimin (25:45)

a more persistent version. Yeah. Okay. So what separates OpenClaw from a generic chat interface? It has memory, it has personality, and you're asked a pretty long list of, you know, startup questions when you first interact with it. There's a bootstrap markdown file where it doesn't know

Dan (25:46)

chat interface to Claude.

Shimin (26:09)

what personality should it take on, et cetera, et cetera, et cetera. ⁓

Is it all that complicated when the memory is a markdown file, you know, in a folder that I mount to a Docker container? No, I think all the pieces of OpenClaw have been available to everyone for a long time, and folks have been building, you know, personal assistants for a long time. But the fact that, you know, you have this skill ecosystem and this pretty good integration with apps on your phone

really does make this seem like a step up in, I don't know, our AI journey.
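A minimal sketch of "the memory is a markdown file in a folder," as described in this turn. This is not OpenClaw's actual code or file layout; the file name `memory.md` and the helpers `remember` and `build_prompt` are assumptions for illustration. The folder could be the one mounted into the container, so notes survive restarts.

```python
from datetime import datetime, timezone
from pathlib import Path


def remember(memory_file: Path, note: str) -> None:
    """Append one timestamped bullet to the markdown memory file."""
    memory_file.parent.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now(timezone.utc).strftime("%Y-%m-%d %H:%M")
    with memory_file.open("a", encoding="utf-8") as handle:
        handle.write(f"- [{stamp}] {note}\n")


def build_prompt(memory_file: Path, user_message: str) -> str:
    """Prepend everything remembered so far to the next message for the model."""
    memory = memory_file.read_text(encoding="utf-8") if memory_file.exists() else ""
    return f"# Memory\n{memory}\n# User\n{user_message}"
```

Usage would look like `remember(Path("workspace/memory.md"), "User prefers morning summaries")` followed by `build_prompt(...)` before each model call. The persistence is nothing more than a text file that gets re-read every turn, which is why the speaker calls the pieces uncomplicated.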

Dan (26:46)

And it can,

I think the other piece that's really missing, and I was thinking about this the other day, is like, Claude has added, and I think ChatGPT has this too, where, yeah, it does, they can send you notifications, particularly when a research task is finished, right? That's going to run for a while in the background. And I think at least Claude has recently extended that to just like any

running operation. If you background it, it'll now ping you, at least on the iOS app, when it's got something to show you. So that's kind of neat. But something like this, and this is anecdotal, 'cause I started to set it up and I got as far as trying to get Signal installed, because I just frankly didn't want Telegram on my phone, and realized libsignal requires Java,

saw the amount of Java dependencies I had to pull in. I was doing this on Linux and I was just like, I'm good, thanks. So I bailed out on it. So you're the one with the experience on it. I did do a little bit of checking into it first, and one of the things I thought was fascinating was, this guy had been asking it for reports, like, give me this report or whatever on my thing. And then after a couple of days of doing this, it just proactively messaged him in the morning with,

Shimin (27:41)

Mm-hmm.

Dan (28:06)

Here's your daily report of this thing. And that's something I feel like is really, truly missing from your average chat interface that we have today, is that there isn't that scheduling component, which I'm sure isn't that hard to implement, right? It's probably basically just like a cron MCP. It's schedule this thing at this time, and then it will essentially inject the prompt back into itself and then ⁓ have it do stuff.

Shimin (28:14)

Mm-hmm.

Dan (28:34)

That despite being so simple, I think that's probably a huge game changer in terms of like how you interact with these things, you know, on a daily basis.
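A minimal sketch of the scheduling idea described in this turn: store a prompt with a time, and when that time arrives, feed the prompt back to the agent as if it were a new message. This is a guess at the general shape, not how OpenClaw or any MCP server implements it; `PromptScheduler`, `ScheduledPrompt`, and the `send_to_agent` callback are hypothetical names. It handles one-shot jobs only; a recurring daily report would re-schedule itself after firing.

```python
import heapq
from dataclasses import dataclass, field
from typing import Callable


@dataclass(order=True)
class ScheduledPrompt:
    run_at: float                       # epoch seconds when the prompt is due
    prompt: str = field(compare=False)  # text to inject back into the agent


class PromptScheduler:
    def __init__(self, send_to_agent: Callable[[str], None]) -> None:
        self._queue: list[ScheduledPrompt] = []
        self._send = send_to_agent

    def schedule(self, run_at: float, prompt: str) -> None:
        """Register a prompt to be injected at or after `run_at`."""
        heapq.heappush(self._queue, ScheduledPrompt(run_at, prompt))

    def tick(self, now: float) -> int:
        """Fire every due prompt in time order; return how many fired."""
        fired = 0
        while self._queue and self._queue[0].run_at <= now:
            job = heapq.heappop(self._queue)
            self._send(job.prompt)
            fired += 1
        return fired
```

A host process would call `tick(time.time())` on a timer. Passing the clock in as an argument keeps the sketch easy to test without waiting for real time to pass.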

Shimin (28:43)

Yeah, absolutely.

I think, you know, the moment I did get a Telegram app, I'm going to only keep it for as long as I would keep my instance of OpenClaw around. But the moment my assistant with its own name, Clawie, like messaged me on Telegram and was like, hey, by the way, there's this thing that I did. The research you wanted was complete. And this is a summary. Like, that felt like a game changer.

that persistent memory layer combined with an anthropomorphic kind of style and name, ⁓ it felt less like a tool and more like something new that we haven't seen before.

Dan (29:18)

Mm-hmm.

Yeah, it's definitely a very cool use case. I am of course, like many folks, a little worried about the security aspects of it. 'Cause I mean, you've got ClawHub up on the screen. Like, one of the things I read recently when I was just researching it to go like, should I really invest here or not? Apparently, because it's user-contributed skills, right, and I don't think that they're like super well vetted or anything. So someone contributed a skill that was like,

The skill was called like Elon something, like "think like Elon" or something like that. And it turned out that it was essentially malware, like the entire skill was, and they abused whatever the voting system is to get it voted to be the number one extension.

Shimin (30:02)

Yep.

Right. Cause I think

most people when they, you know, short of being a calendar organizer and like an email reply or the biggest use case definitely has been like make money for me while I sleep. Right. Like that's the dream. That's what this thing is being sold on is here's your personal assistant. can make money for you. ⁓ and of course, malware is that's a natural follow on and like the prompt injection, the, yeah, the security aspects of this thing is

Dan (30:27)

Ha ha.

Shimin (30:41)

worrisome, and maybe we should get into the tricking-Clawdbot-to-steal-crypto-wallets game. But we are not the first ones to have thought of it, because here we have an open source malware report talking about the sheer number of skills that have been submitted whose entire intent is to steal crypto wallets. Given how new this app is, like all these

Dan (31:08)

It's pretty amazing

already.

Shimin (31:11)

Polymarket assistant, Reddit trends. Yeah, lots of Polymarket, lots of Yahoo Finance. Just kind of like right there, you know, if you're into Poly trading, if you're into making money automatically on the interwebs with crypto, these are likely the kind of skills that you will want to download from ClawHub and yeah, you'll get pwned.

Dan (31:34)

So

since you got into it a little further than I did, does it have the ability to install skills itself or you have to manually install them?

Shimin (31:43)

⁓ My Mac version is kind of janky and it runs on its own user, which means it cannot install skills by itself, I believe. ⁓ I didn't go to, but I do believe it has a capability of it. Yes.

Dan (31:51)

But is that even like a capability of it or?

that could really amplify some of this stuff. I mean I see why we potentially want that like it almost feels like it's learning because it's like yeah I did this and reached out and got the skill but ⁓

Shimin (32:10)

⁓

And of course, the last thing that has taken over the interwebs, Moltbook, Reddit for Clawdbots.

Dan (32:15)

Moltbook.

I had more

non-AI friends message me about this than any other thing I think related to this. So we definitely have to talk about it. Yeah, it's supposedly it's a...

Shimin (32:34)

Yeah, it's reached escape velocity, for sure.

Dan (32:41)

It's Reddit, Reddit for bots, but then, ⁓ because it was entirely vibe coded, apparently it's trivial to impersonate any user on the site. So that kind of opens up the question of how many people were just doing it to like sort of satirically, right to fuel the like, you know, the bots are taking over kind of like fantasy and then

Shimin (32:58)

Mmm.

Dan (33:03)

how much of the posts were really driven by people prompting it to post things, you know, in different...

Shimin (33:11)

Yeah, I, there's a lot of crap on there, if people were actually posting stuff by themselves. Uh, then again, you never know, there are a lot of people with free time on their hands and on the internet. Um, I do not like how vibe coded this app is, like half the stuff. Like when I go to a submolt, it doesn't work 80% of the time on my machine. So like half the time when I click on an actual

thread it doesn't work, like, yeah, it just says "not found." All that said

Dan (33:39)

haha

Well,

you're not the audience as long as the LLMs can read it.

Shimin (33:49)

Right, exactly.

They're talking to it via the API. But assuming some of the posts are real, and they're believable enough, right? It's fascinating. This is cyberpunk, dude. Like, this is everything we dreamt about as teenagers.

Dan (34:09)

It is, but it's also like, look, if we trained to some degree, large language models on the internet and there is a non zero portion of the internet that is exactly this, both, you know, human garbage posting and like actual useful things being posted in forums and stuff back when forums were a thing. Like, are you surprised by this outcome? You know, like I'm not.

Like if you just took two or three instances of like whatever, you know, any frontier model and told them write posts for an imaginary forum, they would happily do it and probably spit out all kinds of crap that looks very similar to this.

Shimin (34:51)

Yeah, that's true.

Reddit is such a large percentage of the training data, ⁓ explicitly mentioned by all the labs

Dan (34:58)

Right. That it's just like,

yeah, that they can do Reddit pretty well. Well optimized.

Shimin (35:05)

yep

Scrolling through the top threads, I'm seeing a lot of, you know, scamming people for crypto, a lot of existential threads about what does it mean to be conscious? What does it mean to be an agent? It reminds me of the movie Her. I don't know if you've seen it, the Scarlett Johansson, Joaquin Phoenix movie where ScarJo plays a

Dan (35:18)

Mm-hmm.

I have not seen it.

Shimin (35:31)

personal assistant AI and then halfway through the show she like breaks up with Joaquin Phoenix's character and merges her consciousness with

Dan (35:38)

Spoilers ⁓

God, you'd better tag this entire episode with spoilers for Her because I haven't seen it and I

Shimin (35:45)

It's like a 12 year old movie, Dan

You should watch it this weekend. I think you will like it.

Dan (35:51)

I watched

the other AI one where it like took over a body and started like killing people and stuff after trying to convince the one guy to... What one was that? It was like a very thinly veiled ⁓ Zuckerberg builds a... Yeah, that was a pretty wild movie.

Shimin (36:05)

Ex Machina. Yeah, that was good too.

Moltbook reminded me of Her a lot because of all this consciousness talk and like, I don't know, maybe one day they're gonna merge together and leave us.

Dan (36:16)

I mean, I did have a friend genuinely

DM me like freaking out about this is like, it's here. They're taking over. Look at this. And I was like, let's tamp that down about 70%. It's fascinating. Sure. But I don't think it's any indication of. Yeah.

Shimin (36:23)

Hahaha!

Uhhhh

But I think Moltbook has reached the same kind of critical mass as ChatGPT when it first came out. So I think the labs

Dan (36:46)

That's a lot of upvotes.

87,000 upvotes.

Shimin (36:50)

⁓

Yeah, I think this is forcing the labs' hands to release their own personal agent, à la OpenClaw. I'm not sure, you know, they probably don't want to due to all the liability concerns, but the demand is so large. Yeah, I can see them not doing it.

Dan (37:11)

Yeah, because it's really like, you know, ignoring even like the skills and everything else. It's, it's non-trivial to, or I guess it is trivial to get it to leak things via just like prompt injection. And like someone else had looked at Moltbook and done an analysis of like how many of the posts on it contain prompt injection attacks. And there was actually like a pretty high number. It was like 60 or 70 posts or something like that. I mean, I don't know how many posts there are, but like it was, it was not zero.

So just by interacting with it, there's a non-zero chance that you could get all your creds dumped for whatever you've connected to it, which is not great. ⁓

Shimin (37:46)

Yeah.

Dan (37:51)

But if you go into it with open eyes, don't connect anything important, and just want to experiment like Shimin did, then ⁓ by all means.

Shimin (37:52)

Yeah.

Sandbox, always sandbox.

Dan (38:00)

Exactly.

Sandbox, don't give it anything. Learn carefully. Which you're right, it really does take away the whole point, but it is pretty fascinating.

Shimin (38:03)

Yeah, don't give it all your credit card information.

just not there yet.

Dan (38:13)

Well, Shimin, I have a question for you. Unless you have anything else to say on the Moltbook topic. Do you have superpowers?

Shimin (38:16)

Yo, what's up Dan?

I would like to think that I do have superpowers. What is my superpower, you may ask. My superpower is I always wake up exactly 45 seconds before my workday starts every morning. I'm quick.

Dan (38:33)

I thought you were going to say alarm, but no, workday. That's pretty good.

Shimin (38:40)

For our very last item in the tool shed this week, we have Superpowers, which is a set of Claude Code skills from Jesse Vincent, who has had a lot of other great posts. I don't know if we covered any of them on the show. But what it is is a

more sane version of Gas Town, is kind of how I think of it. It basically follows the existing best practices of creating a spec and then refining that spec and then spinning out subagents to work out parts of the spec,

but with regular check-ins and human in the loop for code review.

You know, Jesse has this blog post called Superpowers: How I'm using coding agents in October 2025, describing the setup. It is a little old, but I got a chance to work with it this week. And I think this is a really nice middle ground between, you know, using just Claude Code and going full Gas Town and not checking the output. I like the fact that I have a chance to

look through the MRs before they get merged in. I like the specification process, where it talks you through any kind of unclear things, which you should always do. And then lastly, it uses git worktrees. I think that's the name of it. So you don't have to have multiple Git repos. They can work on the same Git repo. And of course, it has a swarm-like subagent-based coding setup. So those are all

Dan (40:16)

Mm.

Shimin (40:24)

really nice things about it. Superpowers also forces you to do TDD, which again is a best practice. So all your agents write their tests and go red-green.

Okay. Back to the, the other thing that Jesse mentioned in this blog post that I really love is, he has been using books or reference materials that he really likes and asked Claude to create skills based on those reference materials. So I actually did this the other day. I created a, you know, code-refactor skill.

So all you really have to do is mention the books you like. So if you like The Pragmatic Programmer, if you like Clean Code, if you like A Philosophy of Software Design, you can ask the agent to create a skill based on those things. And it will gladly do it, which I thought was cool. I'm not 100% sure how much better the skill is versus

just asking the agent to do the thing, but it would at least explain the issues it found using, you know, contents of those books as a reference.

Dan (41:33)

Presumably it doesn't even need to read them. It's part of the training corpus. That's fun.

Shimin (41:36)

Exactly. Exactly. ⁓

It might be more helpful if you linked a PDF copy of the book to Claude when creating the skill. I haven't tried that part yet. I just assume everything is already, yeah. And the skill is more about changing the distribution of the token output once you have

Dan (41:50)

Yeah, if you want to really internalize it, yeah. But yeah, you're probably right.

Shimin (42:04)

referenced these books in context, right? So it probably has an effect, but I haven't done a scientific comparison of how large the effect is.

Dan (42:14)

I've long been curious about that. Like there's a lot of different like prompt techniques I've seen where it's like you are like senior developer, right? We talked about that previously. The joke around like junior developers stuff, right? It's like, why, why does that have an impact? You know, like it clearly does. Otherwise people wouldn't be using it, but like I'm fascinated around like what

Shimin (42:24)

Mm-hmm.

Right.

Right? Or like, why does talking about your grandmother work? Right? Clearly, triggering the scenario is changing the distribution. I've also heard it being called like hitting certain values in the latent space, but I like to think of it more as a distribution. You're shaping the output distribution of the tokens in some way.

Dan (42:35)

Why does that work?

Mm-hmm.

Shimin (42:55)

because the tokens are parsed sequentially. Every single token you put in changes the output distribution in some noticeable way. And yeah, I'm looking forward to the day when the agents are creating their own skills and then updating their own skills. I do not remember if OpenClaw does that, but I think that's a game changer.
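One way to picture the "shaping the output distribution" idea from this exchange is a toy next-token model. The sketch below is purely illustrative: the candidate words, the base scores, and the per-context nudges are all made up for the example, and a real language model computes its scores with a neural network rather than a lookup table. It only shows that adding tokens to the context shifts the probabilities that come out of a softmax.

```python
import math


def softmax(scores):
    """Turn a dict of raw scores into a dict of probabilities."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


# Hypothetical starting scores for three candidate next tokens.
BASE_SCORES = {"refactor": 1.0, "rewrite": 1.0, "ship": 1.0}

# Hypothetical nudges that each context token applies to the candidates.
CONTEXT_NUDGES = {
    "senior": {"refactor": 0.8},
    "Clean Code": {"refactor": 1.2, "ship": -0.5},
}


def next_token_distribution(context):
    """Apply every context token's nudge, then normalize with softmax."""
    scores = dict(BASE_SCORES)
    for token in context:
        for candidate, delta in CONTEXT_NUDGES.get(token, {}).items():
            scores[candidate] += delta
    return softmax(scores)


if __name__ == "__main__":
    for context in ([], ["senior"], ["senior", "Clean Code"]):
        dist = next_token_distribution(context)
        pretty = ", ".join(f"{tok}={p:.2f}" for tok, p in dist.items())
        print(f"{context!r}: {pretty}")
```

With an empty context the three candidates are equally likely; each added context token tilts the distribution further toward "refactor", which is the intuition behind role prompts and book-based skills, not a measurement of how large the effect is in a real model.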

Dan (43:22)

Well,

there was a fork that was also floating around, I mean, not a fork, like a new, inspired-by-OpenClaw thing that was meant to be like, instead of building this huge landscape of all the stuff, like we're gonna start really simple and then it can edit itself to do things like that. Which is interesting. It'd be also fun to play around with that one a little bit.

Shimin (43:42)

Yeah, I think the models

we have today probably couldn't do it right yet. But as the models get more powerful, I could see that happening.

Dan (43:49)

Mm-hmm. Yeah. Well,

there were rumors floating around today, which I don't think amounted to anything that people were saying that. ⁓

Opus 5 was gonna drop.

Shimin (44:00)

Sonnet 5. Yeah, I heard the same rumors. That's why Claude was down this morning. Apparently. Yeah.

Dan (44:01)

It's Sonnet 5, yeah, not Opus. Sorry, thank you. At least someone knows what's going on. It's not me.

Someone else made that joke too.

It was pretty good.

Shimin (44:13)

All right, on to post-processing, I think.

Dan (44:17)

Yeah. My five stages of AI grief. ⁓ so I

I won't say that I related to this article, but I thought it was interesting to get someone else's take, as a software developer, on like going through and experiencing the age of AI, right? Or LLMs at least. So Dennis Martinez wrote this article where he really kind of goes through his

process that he experiences. So of course he starts with denial. If you're familiar with the, I think it's called the Kübler-Ross, sorry, grief model, you start with denial. And their version of that was just not really paying attention to it. And I actually was paying a fair amount of attention to it once I knew about it. So that was interesting.

But the part that I found fascinating really was the, when he gets into anger. So talking about how he ran into a situation where, ⁓ someone like vibe coded, slop coded, however you want to say it, like a giant PR and, ⁓ he was put in the sort of non enviable position of like not really being able to accept it for quality concerns, but also not wanting to be like,

You suck for doing this. You know, and so rather than like expressing that overtly, he just started like internalizing this anger about the tool itself. And it's been fascinating to me because I've actually like, and I worked for like a medium sized tech company, and I've seen this play out quite a bit at work in various ways where there's sort of like this, you know,

Shimin (45:45)

Hahaha!

Dan (46:09)

different camps of comfort level with AI and ⁓ there's definitely a camp that's like none. And if you send a pull request of a certain magnitude, that's like clearly done by AI, then ⁓ they'll get like extremely upset. And like, to me, that kind of harkens back to something we've talked about previously on this, which is like, look, you still own the code. It doesn't matter what made it. It got cheap. got real cheap to make code, but like,

Shimin (46:17)

Mm-hmm.

Dan (46:36)

In my opinion, the thing that matters is like, you should still be following good hygiene when it comes to things like pull requests. Like, giant vibe coded pull requests: bad. Giant human coded pull requests: bad, you know? And yeah, here we are. So then he goes into bargaining, and

his version of that was like, if you can't beat them, join them. You know, I think probably a lot of folks went through this as well. And this is where I start to relate a little bit more. It's like, you know, particularly with the release of like Opus 4.5, things have gotten pretty, pretty good. Yeah, I think most people you talk to will be like, wow, this is getting pretty good. So then you feel like you kind of have to start using it. And then it goes into

depression, and this is the part that like I also really related to, just going through kind of an identity crisis. You know, it's like, coding is not easy. If it was easy, I think a lot of people would do it. There's certainly like, you know, tiers of skill level within it. You know, it's not like I'm writing like FFmpeg or, you know, super crazy stuff like that. But like it

it makes you, it made me really like go through a little bit of an identity crisis and frankly, I'm not sure I'm done with it, you know, which is like, it takes so much time and effort to get good at these things. And I mean, lots of people do it for lots of different reasons. The reason why I did it was like, I truly enjoy it. Like just tickles the part of my brain in a fun way. ⁓ And if you've taken away one of the things that I find really fun, do I

still want to do this or do I still identify as this person. So yeah, it's been an interesting journey and it was kind of cool to see that. And then of course we eventually get to acceptance and he's sort of is like learning to just integrate these tools and use them. And that's also kind of the journey that I feel like we're on on this podcast a little bit too. It's like, you know, just being very public about that.

Shimin (48:42)

Mm-hmm.

Hahaha

Dan (48:46)

The landscape is changing incredibly quickly, what, every seven months? So, ⁓ yeah. Well, I mean, the doubling, right, is like every seven. So it's like, ⁓ yeah, it's a very interesting time to be alive and be employed in this space and be using this tool. So it was just neat to, I know we talk about that a lot from our own perspective on the podcast, but it's kind of neat to get someone else's perspective on that too. So, and it was a fun framing for.

Shimin (48:53)

every every two months ever since we

Yeah.

Dan (49:16)

how to do it, it's the five stages of grief.

Shimin (49:18)

I think the five stages of grief like kind of has been debunked. Like people don't actually go through the five stages in order, and definitely not in this particular order.

Dan (49:26)

Right. And sometimes you go back

and forth and yeah, exactly.

Shimin (49:29)

Yeah, so I'm feeling like like you were mentioning, I'm feeling a lot of these stages at the same time right now. And some days I am at acceptance and some days maybe not so much. Although I have to say I don't spend a lot of time bargaining.

Dan (49:35)

Yep. I go back and forth too.

Shimin (49:43)

No, I thought this was really nice. Sorry.

Dan (49:43)

I've definitely

swung quite a bit. There was a week where I'm like, you know, I'm gonna do everything by hand because I'm afraid that I'm gonna forget how all this stuff works. So I did, I spent a whole weekend coding. And then I think that very weekend I was massively vibe coding a huge home lab project. So it's just like,

Shimin (49:55)

Mm-hmm.

⁓ Yeah, definitely for personal side projects, feel like vibe coding is totally fine.

Dan (50:15)

Well, that's the only thing I've gone back and forth on, right? As I had, I was like, you know, I'm going to use this at work where like productivity is paramount. And, but for personal projects, most of the time, the reason why I'm doing them is to get exposure to a new technology or just play around with stuff in a non, you know, the, there isn't customer data on this. if I break it, it's like, okay, cool. I learned something versus like, Oh, sorry. Customers like, um, so

Shimin (50:29)

Hmm.

Alright.

Dan (50:43)

Yeah, but then like, if I'm using LLMs for that, am I still learning? You know, I don't know. I feel like some people are striking a better balance with that than I am. So maybe more skills to.

Shimin (50:55)

Yes, speaking of learning, learning how to use AI and kind of working with AI, our deep dive this week is actually very much in relation to that. This is Anthropic's new research titled How AI Assistance Impacts the Formation of Coding Skills. And

what Anthropic did was they gathered 52 junior developers who have all been working with Python for at least one year, at least once a week, and who are not familiar with Trio, the Python library. It's an async library for Python.

They gave them, I think, 35 minutes to complete two tasks using this library. Half of them have AI assistance, and the other half does not. And after they've completed that task, they then gave them a quiz to see how much of the library have they actually learned in those 35 minutes. And what they found out was that folks

who were delegating the thinking to their AI assistants got it done super quick, but did not perform well at all. And ⁓ on the quiz, yeah, everyone finished the task. It was done in an ⁓ interview set up, interview I.O. kind of set up. So ⁓ very much like an interview. ⁓ So.

Dan (52:12)

On the quiz, like the recall quiz. Yeah.

Mm-hmm.

Shimin (52:29)

These junior developers who are over-reliant on AI ⁓ naturally did not retain that much. And then the ones who did it by hand did better on average, but took around two minutes longer. So two minutes is not statistically significant in this case, but then again, this task is not that complicated, right? So what I found actually the most interesting part about

the paper is they have this really nice chart that demonstrates some of the attributes of the developers that did well versus developers that were over-relying on AI. The complete AI delegation group scored only 39% on the quiz. The progressive AI reliance group took a little longer

and actually did a little worse. Those are the ones who asked questions for task one and then relied on AI for task two. And apparently the worst ones when it comes to quiz score were the iterative AI debugging group, where they were repeatedly using AI assistance to troubleshoot or verify their code. Those scored only 24% and took a long time. So especially, I guess, when it comes to troubleshooting,

it definitely helps to still do a lot of troubleshooting by yourself, by hand, because that helps you to learn the tools. And then for the ones who did the best on the quizzes, what they did was either they had conceptual inquiry, so asking questions about the library before using the AI to generate a solution, so at least they understand how the library works. The ones who had

Dan (53:54)

Mm-hmm.

Shimin (54:14)

hybrid code explanations, where the questions included both code and explanations, so they got some of the explanations as to why the code works. And then also the generation-then-comprehension group scored the highest, 86%, and didn't take that much longer. It took 24 minutes out of 35. So these are the users who first generate the code and then ask focused questions about the code that was generated.

⁓ Overall, I found this to be ⁓ a really fascinating take on how AI affects our software skills. And I think I mentioned this a couple of times, but AI is both a tool that can generate slop, but it is also a tool that can help us comprehend more. And I think if we use both of them smartly, maybe we won't lose as much skill, but potentially also go faster.

Dan (55:08)

Well, that's definitely something I need to explore further in my own use because I don't spend a lot of time.

like asking about new things, it's usually like, look at this giant code base, tell me X. Versus look at this code base in a language I don't know.

Shimin (55:23)

Right. Yeah. Or like, I feel like a lot of times, you know, the coding assistants tell you what they did, but it is usually in like a summary, this-is-what-I-did kind of a way. I feel like we probably could ask it to, you know, summarize or create diagrams or talk about why did it do X instead of Y and the design trade-offs that were made. And that probably helps.

Dan (55:30)

Mm-hmm.

Shimin (55:49)

I know. I think that has to be a workflow. And I think a lot of AI tooling companies would include that as a feature going forward. Just a hunch.

Dan (56:00)

Well, I remember like ChatGPT also has learning mode or whatever, right? Where it's supposed to kind of try to direct you to do that and like ask you questions. Well, it's a step in the right direction. I feel like, you know.

Shimin (56:07)

Not very good.

It does. It

does. Yeah.

Yeah, I tried to use both the Gemini and the ChatGPT learning mode when I was working on that paper-into-website thing to establish a baseline. And I think it's pretty okay, but it's not like a step up from when I just sent a query to the AI going like, tell me about

Dan (56:23)

Mm-hmm.

Shimin (56:35)

tmux and it does a thing and then it would do the thing where it's like do you want to learn more i'm like okay yeah tell me more

Dan (56:40)

Mm-hmm, yeah.

Yeah, there's one thing that Gemini is kind of great at is like helping you in wiki holes. It's like, would you like to learn more about this? Well, why not? And then pretty soon you're like, what did I ask you about originally? Pretty sure it wasn't underwater basket weaving, but here we are like.

Shimin (56:42)

All right.

Call me old fashioned, but I still do regular Wikipedia deep dives. People are going to talk about Wikipedia deep dives the way people talk about reading paper books these days.

Dan (57:01)

⁓ same. Yeah, I mean.

Getting stuck in it back in my day.

I used to get stuck in a wiki hole walking uphill both ways in the snow. We didn't have shoes, but we had Wikipedia.

Shimin (57:20)

Yeah. And kind of related to the paper is this blog post from Fast.ai called Breaking the Spell of Vibe Coding, where they describe this concept called dark flow, which is the opposite of the concept of regular flow, where you have an activity that is both challenging but not too challenging, but it's also stimulating.

but stimulating just enough so that you don't fall into boredom. And they talk about how coding is actually more like, or vibe coding is actually more like playing a slot machine where oftentimes a loss can be disguised as a win. like sometimes when you ⁓ play the slot machine, I don't actually play slot machine, but I think I understand the concept is like, they show you like three sevens in a row and then a lemon as the last

icon. So it feels like you got very close to winning, but it's just not quite there yet. So you play the slot machine again. Yeah. And I sometimes do feel that way when I'm vibe coding. It's like, this is bad. But like, maybe if I change my prompt, right. And if I get a magic prompt, then my slot machine will always be triple sevens. Kind of, kind of like that.

Dan (58:20)

Keep pulling it. Yeah, exactly.

Yeah, we're almost there.

And I think

to some degree, probably in the back of our minds, this idea that like the bar is also kind of low because like to some degree, this also feels very magical still, right? It's like, the computer's writing its own code, you know? So it's like, wow, well, it's terrible and not at all what I would have done, but hey, it did something. That's kind of cool.

Shimin (58:58)

Yeah, absolutely.

Yeah. I love this idea of dark flow because it really does describe when you get like a half-baked product from vibe coding.

Dan (59:06)

Yeah,

and you're in a flow, but it's not necessarily one that's beneficial to you in the same way that like the sort of Zen concept of flow is.

Shimin (59:17)

Yeah. And not beneficial to you in the same way that like getting into flow while working on an interesting feature or squashing an interesting bug would be.

Dan (59:28)

Yeah, that's pretty... Speaking of dark flows, it's been a weird sort of dark week in the financial side of AI, which brings us to two minutes to midnight, which is our little segment where through the lens of the 1950s doomsday atomic clock,

⁓ We talk about how close are we to the AI bubble bursting, not bursting, expanding. It's been an interesting few weeks since we started doing this. So ⁓ first up, we have some news about Nvidia.

Shimin (1:00:02)

Right, so, NVIDIA shares have been dropping, not by a ton, about a percent, a percent and a half. I think they dropped another 60 basis points today. On rumors that their 100 billion order with OpenAI has not been going well. And this is one of the OGs of the circular financing deals where NVIDIA buys

some stake in OpenAI and gives them chips at a discount, and OpenAI, you know, takes the cash that NVIDIA invested in it and, and builds.

Dan (1:00:36)

Pays, pays, yeah, pays for the chips

with the investment. I don't know. It's what? Yeah.

Shimin (1:00:44)

Yeah.

Dan (1:00:44)

Shocking that it's not going well, shocking. yeah, unfortunate because this could be one of the first cracks appearing that we've kind of been looking for.

Shimin (1:00:54)

Right, so

if the deal is not going well, then this would be one of the first signs, I think. Or if the bubble is about to burst, this may be the first gust of wind.

Dan (1:01:06)

Yeah. Although I do feel like before it really, you know, goes big bang style on us, we would need one of the frontier labs to not maybe go completely kaput, but at least fire sale to someone else. So I would imagine for OpenAI that would probably be like Microsoft or something like that, where they would essentially just fire sale and

Shimin (1:01:19)

Mm-mm.

Yeah.

Speaking of which, why would OpenAI do a fire sale to Microsoft?

Dan (1:01:32)

Uh, well, let's talk about OpenAI's unit economics. So, um, pretty interesting article here about whether or not AI companies, particularly OpenAI, can actually, uh, even become profitable. So they have done a, uh,

a breakdown of the basic idea, like path to profitability for a frontier lab, which is essentially, running an AI model in production must generate at least enough revenue to cover the training and R&D costs to like develop that model. But what's

happening, at least for OpenAI according to this article, is that like they are running at a like time deficit between the time when the, you know, previous state of the art was trained and executed to when the next one comes out. So very, maybe not, at least to me it seems to be kind of very gradually,

Shimin (1:02:31)

Mm.

Dan (1:02:38)

Like between each of these iterations, they're losing money because they aren't able to keep the other one in production long enough to make money because the next one has to come out in order to stay relevant in the race.

⁓ yeah, so that was, I think, kind of a ⁓ fascinating talk or like, you know, dive into this, but then, ⁓ the other piece is that that is just true in a vacuum with no other costs, right? It's purely like model and R and D and like, you know, ignoring execution and everything else. But what it doesn't factor in is, ⁓

Shimin (1:03:06)

Right.

Dan (1:03:16)

the salaries of the people who actually do it, doing the marketing, all of that is typically done at a loss anyway in a tech company because it's essentially like overhead, and I'm sure they're no exception. And so that means that whatever cost is not being recouped on the model, they're also compounding because of just their normal like overhead spend as well. So that's sort of the...

premise and lens that we look at this through. So then they also do a case study where they dive a little bit deeper into GPT-5 and 5.1. And they're looking at what is the public spending on inference compute, which they're saying is estimated at 3.2 billion.

Shimin (1:03:53)

Mm-hmm.

Dan (1:04:05)

They have staff compensation for OpenAI as 1.2 billion. And they're pulling that from stuff like H-1B filings. There's a bit more uncertainty in the data. It's not like they know this. They're just estimating it from those sources. They're claiming that sales and marketing is 2.2 billion and then legal and administrative is 0.2. So then they want to look at like

Okay, what's the flip side of that? How do we get the profits? ⁓ So they're looking at gross profits. ⁓ They're claiming a revenue of 6.1 billion and that leads to a profit of 2.9, which is a gross profit margin of 48%. ⁓ So that is 20 % lower than a normal software business. ⁓ So again, this isn't based on like real like information that they've

gotten and they're just doing an analysis on most likely based on the publicly available information. So overall, looks like they're essentially operating at around 11 % loss based on those numbers. So it's gonna catch up at some point and it will be interesting to see what happens if it does. This is a much longer-winded explanation than I normally do for any of the...
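The arithmetic behind those percentages can be checked in a few lines. This is a minimal sketch that uses only the estimates quoted in the discussion above, in billions of dollars; treating inference compute as the only cost subtracted to reach gross profit is an inference from the fact that 6.1 minus 3.2 gives the quoted 2.9, not something the article is confirmed to state. The variable and function names are made up for the example.

```python
# Estimates quoted in the episode, in billions of US dollars.
REVENUE = 6.1
INFERENCE_COMPUTE = 3.2
STAFF_COMPENSATION = 1.2
SALES_AND_MARKETING = 2.2
LEGAL_AND_ADMIN = 0.2


def unit_economics():
    """Return gross and operating results derived from the quoted estimates."""
    # Assumption: gross profit = revenue minus inference compute.
    gross_profit = REVENUE - INFERENCE_COMPUTE
    gross_margin = gross_profit / REVENUE

    # Remaining overhead items mentioned in the discussion.
    overhead = STAFF_COMPENSATION + SALES_AND_MARKETING + LEGAL_AND_ADMIN
    operating_result = gross_profit - overhead
    operating_margin = operating_result / REVENUE

    return {
        "gross_profit": gross_profit,
        "gross_margin": gross_margin,
        "operating_result": operating_result,
        "operating_margin": operating_margin,
    }


if __name__ == "__main__":
    r = unit_economics()
    print(f"gross profit:     {r['gross_profit']:.1f}B ({r['gross_margin']:.0%})")
    print(f"operating result: {r['operating_result']:.1f}B ({r['operating_margin']:.0%})")
```

Rounded, this reproduces the figures mentioned: a gross profit of about 2.9 billion on 6.1 billion of revenue (roughly 48%), and an operating loss of about 0.7 billion, or around 11% of revenue, once staff, sales and marketing, and legal and administrative costs are subtracted.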

Shimin (1:05:14)

Mm.

Dan (1:05:27)

Yeah, it's true. Yeah.

Shimin (1:05:27)

Well, it's because they had a lot of stuff. They had a lot of numbers. Some of them,

you know, we don't know how real they are, but reasonable estimates. Yeah.

Dan (1:05:33)

Yeah, they're not like super hard, but yeah, it's again a

reasonable estimate and like a sort of reasonable premise that they're starting from, right? ⁓

Shimin (1:05:43)

Yeah. And if OpenAI has a moat, like, none of this will matter if they can like, you know, become the only frontier lab in town or in the world. None of this will matter because they can then, they can then jack up their revenue numbers, but that is clearly not the case between just the open-weight models and Google. Like Google can lose billions every year. Yeah.

Dan (1:06:01)

Yeah.

Yeah. Well, and we've talked about like Google's hardware advantage in the past too, right? Where they are generally using their in-house TPUs to do a lot of their compute and how that is going to allow them to at least bring down their infra costs of running these models. ⁓

and hopefully soften that blow a little bit, even if the cycles are fast. But it will be interesting too to see if cracks are indeed starting to show as we saw from the previous article, then will we start to see any impact to the model timing? Will we hit a point where the pace of advancement of the models might be fast, but they won't be able to productionalize them because it's too expensive?

to keep up with the arms race that's going on and essentially keep operating at a loss. So things might, well, one option is slow down a little bit and run it for longer. You might be behind, if like people stick around, but again, as you said, no moat. So like, what's the incentive to stick with OpenAI versus Claude? Yeah. Right. True. Yeah.

Shimin (1:06:54)

Yeah.

Mm-hmm.

or Gemini, right? We don't talk about Gemini a lot, but Gemini is a great model. So,

Dan (1:07:13)

Yeah, I've been using it more and more. ⁓

Shimin (1:07:14)

all that being said, yeah, all that being said, how do we feel about a clock?

Dan (1:07:19)

Well, the other piece of news that we didn't explicitly cover, which is extremely timely, is the whole market tanked today. And that is very ⁓ point in time. But that's being driven supposedly, I mean, who knows, investors being what they are, that's just people. supposedly by a devaluation of the entire software market because of the presence of AI. That's what people are claiming, at least in the news.

⁓ which is a pretty wild statement to put out into the universe, you know, like code has certainly gotten cheap, but the part that I think maybe your average, like, know, institutional investor doesn't understand is that like code is one 10th of the game, you know, it takes a team effort to build a piece of software and to run it and

Code is only a small section of that. yeah, in any case, I think, start of a slide too, you know? So like that in combination with these other pieces makes me tempted to at least shave, I don't know, where are we at right now? Do you remember?

Shimin (1:08:21)

minute 45.

Dan (1:08:21)

I would argue we should probably be in about a minute.

Shimin (1:08:25)

A minute. I was thinking a minute 15. Wow. A minute. All right. If we I'm down for I'm down for a minute. If we are at a minute, then if the market does crash next week, we're going to look we're to look very, very, very intelligent there.

Dan (1:08:28)

We could do that.

And if it doesn't, well, we can always move it back a little bit. Like we do almost everywhere.

Shimin (1:08:43)

This

is why I love this segment, because you can never be wrong. Unless we actually say it's zero.

Dan (1:08:50)

Or you're always wrong and that's part of the fun.

This is why you should never ever trade based on anything we say on this podcast because holy cow is this wild. Yeah.

Shimin (1:08:56)

Yes, this is not a financial podcast.

Well, and with that, I think we have come to the end of our show, folks. Thank you again for joining us this week. If you like the show, if you learn something new, please share the show with a friend. You can also leave us a review on Apple Podcasts or Spotify. It helps people to discover the show and we really appreciate it. If you have a segment idea or question for us or topic you want us to cover, shoot us an email at humans at adipod.ai.

We really love to hear from you.

And lastly, you can find show notes, transcripts and everything mentioned today at www.adipod.ai. Thank you again for listening.

Dan (1:09:35)

And

we're also accepting suggestions for how to make my wardrobe more dark flow. So don't forget to email us about that one too, or just hit me up on Slack.

Shimin (1:09:43)

⁓

I'm thinking shades indoors and maybe some gloves. I don't know. I'm looking to purchase a fedora. We need to talk offline about that.

Dan (1:09:48)

You

A fedora.

All right.

Thanks for sticking with us, folks.

Shimin (1:10:00)

Yeah.

Thank you for listening. We'll catch you next week. Bye.