Pentagon Anthropic Drama, Verified Spec-Driven Development, and Interview with Martin Alderson!

Shimin (00:00)

Dan, I love this. ⁓ OpenAI did just raise $110 billion. Who knows where that money is from and what kind of back channel potentially happened there.

Dan (00:02)

Hahaha.

don't think it's a conspiracy.

Rahul Yadav (00:08)

Yeah.

let me get my conspiracy in there while we're on it There was that whole like too big to fail thing about OpenAI where they had hinted along those lines. If you become a critical part of the government and you're helping it fight wars and do all sorts of other things, you do become too big to fail.

Shimin (00:21)

Mm-hmm.

Rahul Yadav (00:34)

⁓

Dan (00:32)

Nice.

Wait, no, no, no.

What if it keeps going even deeper? Sorry, I have to. Because they realize that if OpenAI goes, that'll be the, like, potentially the pin that, pops the bubble. So the entire drama with Anthropic is manufactured so that they can do this contract to prop them up because they know it props the entire economy. Okay, sorry, I'm done, I

Rahul Yadav (00:46)

Yeah.

Shimin (01:14)

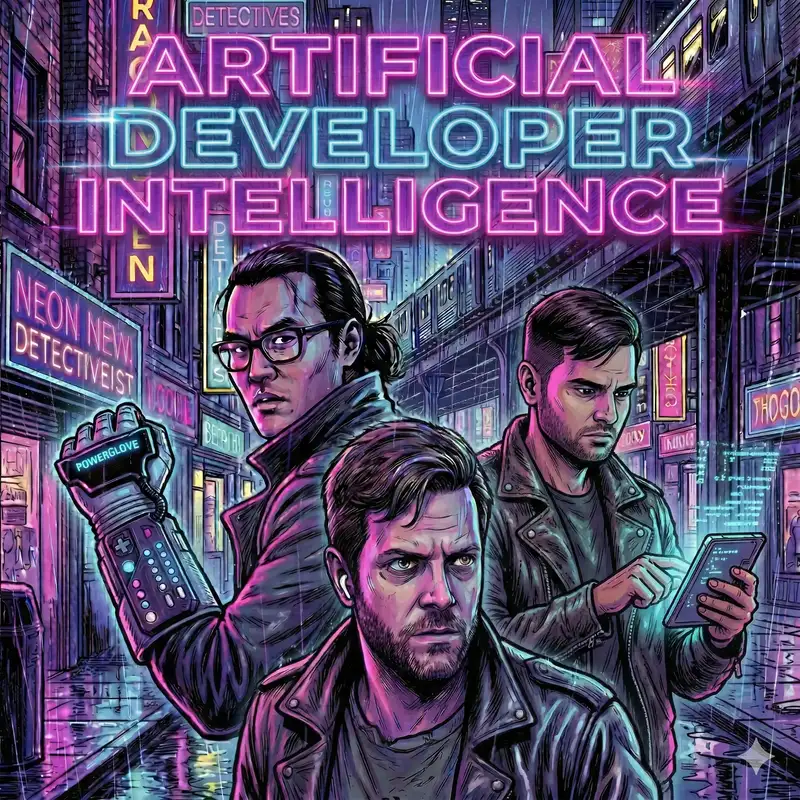

Hello and welcome to Artificial Developer Intelligence,

podcast where three software engineers talk about the impact of AI on programming. I am Shimin Zhang and today with me are my co-hosts. Dan, his operational decisions are up to the government, Lasky and Rahul, he never underestimates the elasticity of human desire, Yadav. How are you two doing today?

Dan (01:38)

doing well. Thanks for asking. You always get me with the cold open. Is that what that's called? I don't know.

Rahul Yadav (01:40)

Hello. How are you doing Shimin?

Shimin (01:47)

Yeah. so.

Dan (01:47)

It makes it hard to

come up with like something else to say because I'm too busy laughing at whatever you come up with.

Shimin (01:53)

Then I've done my job well. On today's show, we'll start with the news threadmill as always, where we have the big news item of last week slash this week, the Pentagon drama with the various large language model labs, Anthropic and OpenAI, followed by Steerling 8B

Dan (02:10)

of ease.

Shimin (02:11)

A model that is fully inherently interpretable.

Dan (02:16)

means in a minute. But next, we're going to go into the technique corner where Shimin will be telling us about verified spec-driven development, not just any spec-driven development.

Shimin (02:27)

Yeah. And then we're going to move on to post-processing where for the first time we'll have an interview. We'll have an interview with Martin Alderson about his blog post on which web framework is the most token efficient. I'm excited for that.

Dan (02:41)

Yeah. Then last but not least, we'll be talking about the latest crisis in AI funding in our two minutes to midnight section, where as always we determine how close we are to the bubble bursting, not bursting, et cetera.

Shimin (02:55)

All right, and to get us started, let's talk about the Department of Defense slash Department of War drama with the AI Labs Rahul, why don't you get us started on that?

Rahul Yadav (03:07)

So in January, we captured Maduro from Venezuela. And apparently, Claude was used during the operations.

which is a whole, like, no one's really dug into, like, sorry, what was Claude doing in there? I think we need a few episodes and all sorts of news articles dedicated to that. So Palantir uses Claude, has used it for the past few years. That's why Anthropic is the only

Dan (03:23)

That was my first reaction when you said that, for sure.

Shimin (03:25)

Thanks.

Dan (03:32)

Claude was on the ground with a custom M4.

Rahul Yadav (03:44)

model company that has its LLM being used in defense operations, through their partnership with Palantir. They learned about this and, in a regular check-in meeting, were asking their Palantir contact about, hey, Claude might have been used in this, and they were trying to get more information. That apparently freaked out the Palantir person and then

because they were like, why is Anthropic digging so much into this, they reported it just as a concern up the chain to the Pentagon, and it eventually made it to our Department of War

Secretary Pete Hegseth. There are like two main red lines. Everybody's heard of this. But in case you haven't, Anthropic has had this in the contract since they signed this back with the Biden administration. Two main red lines. One is that their AI should not be used for mass domestic surveillance of U.S. people. The important thing to note here is if you're outside the country, U.S. citizens, you can still be

just not on US soil. And then no use of autonomous weapon systems because they want to have a human in the loop. models are just not good enough to make the life and death decision and they should not be putting humans in there just yet. They want the humans to have control over that.

The Department of War did not like that. They're like, you can't tell us how to do our business. We want to, once we have access to the technology, we want to run it however we want. ⁓

One of the main things that this article from Zvi calls out is

These are not like feature flags or something where you go turn on mass domestic surveillance or turn it off and then same for you know turn on full autonomy go go full terminator mode. These are very much like policy things that are outlined in the contract of like you you shouldn't use Anthropic's models for these things and then obviously like if they do then Anthropic would

Shimin (05:25)

Ha ha ha ha ha ha ha ha ha

Rahul Yadav (05:40)

you know, it would be a breach of contract. And so that's what Anthropic was trying to hold their ground on. Came to a head last Friday, the Anthropic had until Friday, 5pm Eastern to either, you know, give the Department of War full access and get these out of the contract or they would stop using Anthropic and label them two contrary things.

label them a supply chain risk, which means that this is against US interests. These would be things like, people say even DeepSeek, which is a Chinese model affiliated with the Chinese military, is still not labeled a supply chain risk, but Anthropic was threatened with being labeled a supply chain risk, a US company, US folks building the model. At the same time as being labeled a supply chain risk, also that the Department of War would invoke the Defense Production

Act and force them to let them use the model and you would only invoke the Defense Production Act on things that are necessary to you so you cannot have a thing that threatens you and at the same time be necessary to you. Maybe a good thought exercise for the audience if you can think of something like that we would love to hear both a supply chain risk and something that's necessary to our national security.

Dan (06:51)

Although I would argue

that DeepSeek is not a supply chain risk because they're not in the supply chain. So there's that, but you know.

Rahul Yadav (06:59)

Could be if the contractors end up using them or something. yeah, Anthropic to their credit did not want to go back on this. They wanted to keep the red lines in. They feel strongly about the Fourth Amendment. They do not support mass surveillance of.

US citizens or US persons I should say.

And then obviously human in the loop. The most famous example of having a human in the loop is back in the 70s during Cold War

Stanislav Petrov was working in Russian nuclear-like monitoring station. And Russian ⁓ nuclear monitors are known to be flaky, let's put it mildly. And what had happened was sun rays reflecting off of the clouds somehow on their monitors look like US had just fired five nuclear missiles at them.

Dan (07:34)

launch detection.

Rahul Yadav (07:46)

you what Petrov did and then we'll put AI in its place to like see what human not in the loop looks like. Petrov looked at that and then by protocol he should have reported it up the chain. You reported up the chain, it's bound to escalate and then you know we were very close to ⁓

Dan (08:02)

then it's real two minutes to midnight.

Rahul Yadav (08:04)

Yeah, that's the real two minutes to midnight. We were very close to annihilation. then Petrov correctly reasoned that if the US is going to launch an attack on Russia, they had many more nuclear missiles. They're not going to just fire five and then sit back and see what happens. And so he looked at not just the, yeah, not just at that data, but he also looked at some of the other ground-based radar systems and other things. And they did not

Dan (08:19)

Yeah.

Rahul Yadav (08:30)

support that data. So he looked at all of that and broke protocol. He should have reported it, but he just decided to not report it at all. Later it came up in the reports. He was reprimanded for that and everything because you know, have to, you're the Soviets, you have to like hold the protocol as the supreme thing. But thankfully because of that, we prevented a major nuclear escalation.

replace Petrov with like AI Petrov, which is just looking at certain data, which can easily be faked, right? Even if you don't even have to tell it, this might be real missiles or even reflection from the sun, you can literally figure out a way to feed it fake data. And as long as the model is trained to obey any orders that you give it, there's no way that it would stop and carrying out its orders.

go anywhere from escalation outright to just fire back if you ever see these problems. You've seen Dario desperately calling this out, but sometimes these things hang on like the...

are soldiers who do not want to follow the rules because once in a while you just have some soldiers who are unwilling to carry out the orders of an authoritarian or something and that's what keeps us from you know really escalating things so they just don't feel comfortable with their models ever doing that or their models not doing that right now they think the models would get there one day and they'll be capable of doing this they do see a world where

Shimin (09:49)

Mm-hmm.

Rahul Yadav (09:56)

autonomous warfare is a possibility, but today they're not there and they do not want to concede to that. And then mass surveillance we talked about, they just firmly believe.

It's a violation of the Fourth Amendment. So all of this happened. Anthropic, they have, as far as I know, still, and this is Tuesday, March 3rd, when we're recording this. Friday was when they got the label of supply chain risk and all that over

Twitter where all the politics happens. They have not received an official letter because if you deliver that then you go through like court challenges and everything. So as far as I know they still haven't received anything official is just that the that's what the government would do. Now Friday evening after all this

Shimin (10:33)

Mm-hmm.

Rahul Yadav (10:37)

Sam Altman sends out a tweet saying, we've figured out the same thing that Anthropic just died on that hill for, and the Department of War is completely fine with, you know, the things that we wanted, and we've gotten the same things worked out with them. They published a whole, people were like, this doesn't make any sense. If OpenAI could get those concessions in that agreement, then so should Anthropic. So there's obviously

Shimin (10:41)

⁓

Rahul Yadav (11:02)

like you know Sam is giving his take on what they might have just agreed to not necessarily what's in the contract. So

OpenAI put out a statement on what they've signed with the, commenting on what they've signed with the Department of War. It says that it would be used for all lawful use, which is the gray area here, because the lawful use, and there's all sorts of like commentary on this already.

lawful use is very murkily ⁓ defined. Department of War just has its directives. There is no congressional law that keeps it from

Dan (11:30)

Yeah, we don't have much law around our arms yet.

Rahul Yadav (11:41)

doing certain things. A lot of these restrictions, the Department of War can within a few minutes decide, here's a new directive, this is lawful now, while it wasn't before. So that's one thing. An example that is in one of the articles from Scott Alexander, where different

people had collected this data and put it in there, is: let's assume the US is trying to get data on terrorists. And so they tap deep sea cables to try and get data on them.

As a side effect, if they also end up getting data on all of US citizens, lawfully they got the data on the terrorists, but hey, if we end up getting a little bit on the citizens, now they have access to that data. They cannot on purpose search that data for like specific people's name, but if they happen to find something about that person, then it's also part of, so they can use it in different places. So it goes very easily from like you started for some reason and you end up getting data for another reason.

And there's also, we just happened to happen, it happened to the deep sea cables and here we are knocking at your door. And obviously there's another lawful way to just get the data as the government can legally just buy the data from third parties as well.

Dan (12:35)

We just happen to have this database.

Rahul Yadav (12:51)

these are all different definitions of lawful use and all fall under lawful use but OpenAI says that they have worked out a deal that unfortunately, Anthropic couldn't work out but they support Anthropic also, you know, getting the same deal and everybody getting equal treatment from the Department of War. Three specific things. Go ahead. Yeah.

Dan (13:09)

So the other part that I found out about this too is like, I was

waiting for you to mention it, that deal with OpenAI had been started on the previous Wednesday.

Rahul Yadav (13:20)

interesting.

Dan (13:21)

So it was rolling, I think well before some of even like the more like truly serious drama even happened. So part of me wonders to some degree if it's just like a almost like PR capitalization on Sam. You know, Sam is pretty great at that. So it's like he's just like he was already in the works and then like, yeah, well, we'll just, you know, try to fight back a little bit at Anthropic. But anyway, just pure speculation on my part, but I find it.

Shimin (13:25)

Mm-hmm.

Rahul Yadav (13:26)

Yeah.

Hehehehehe

Yeah.

Shimin (13:47)

Conspiracy

Dan, I love this.

⁓ OpenAI did just raise $110 billion. Who knows where that money is from and what kind of back channel potentially happened there. Just all rumors, But I love myself a good conspiracy.

Dan (13:49)

Hahaha.

don't think it's a conspiracy.

Rahul Yadav (13:55)

Yeah.

let me get my conspiracy in there while we're on it and then I'll wrap up with the three things I wanted to comment on. There was that whole like too big to fail thing about OpenAI where they had hinted along those lines. If you become a critical part of the government and you're helping it fight wars and do all sorts of other things, you do become too big to fail.

Shimin (14:15)

Mm-hmm.

Rahul Yadav (14:25)

So I'll just say that and leave it there. On my take on the conspiracy. ⁓

Dan (14:28)

I see where you're going with it. Nice.

Shimin (14:30)

Ha ha ha.

Dan (14:33)

Wait, no, no, no.

What if it keeps going even deeper? Sorry, I have to. Because they realize that if OpenAI goes, that'll be the, like, potentially the pin that, pops the bubble. So the entire drama with Anthropic is manufactured so that they can do this contract to prop them up because they know it props the entire economy. Okay, sorry, I'm done, I promise.

Rahul Yadav (14:45)

Yeah.

So one thing that is uniquely positive in my opinion in Anthropics contract is also explicitly calls out like it shouldn't be used for social credit systems and things like that, which I do think is worth calling out. But again,

Once you say lawful use and the definition of lawful use can change, you cannot, it would be one of those things where you can forever try and be like, what about this thing? What about this thing? You cannot really imagine all the different ways things could be used. But an attempt was made is what I took away from that.

The other two things that they call out on why their agreement is even more restrictive than Anthropic. First, they say it is cloud only and no edge deployment, which I didn't feel convinced about at all. You can run AI in the cloud and can totally still be autonomous. So I don't think it's not in the edge, so it cannot be completely autonomous. Just didn't stand up to scrutiny

And the other thing they said was if they want to, they'll have OpenAI personnel in the loop to monitor usage of these things. But let's be realistic. Any OpenAI personnel or anyone else would be a contractor to the government. And maybe I'm getting my definitions wrong. They won't be a government employee as far as I know. And so in high stakes decisions, they don't get to have a say on what the Department of War.

So it almost seemed like a nice to have, but like when the rubber meets the road, it didn't really seem realistic to me that having an OpenAI employee there is really going to make a difference in what decision the government is going to make.

Dan (16:28)

I mean, I don't necessarily want to take OpenAI's side in this, like, or really any sides per se, but like the one thing that I find odd is that the discourse is kind of talking about this as if you're going to somehow glue a large language model into a like tactical scenario and have that have a meaningful impact in it. And I'm sitting here thinking about it, maybe it's my own bias showing, but I think that's a

pretty poor thing to do with it. The thing I would use it for would be like processing like the massive amount of unstructured data that intelligence gathering operations generate and structuring it in a way that's queryable to be useful. when they're saying they're using it in these operations, that's kind of what I figure is they're taking radio intercepts and stuff that and jamming it all together. And then maybe also using agentic querying systems to help you like filter through this crap.

easier in the same way that you and I might use it to filter through like, know, Grafana logs or something like that, you know.

Rahul Yadav (17:21)

Yeah.

Shimin (17:22)

But that does sound like exactly the use case for finding out where the dissidents in your society are.

Dan (17:28)

yeah, I'm

not disputing the mass surveillance one at all. think that's like a definitely, especially the dragnet effect, like the regarding like the autonomous thing, I'm just kind of like.

are they gonna put, you know, a 120B on a Raspberry Pi and like stick that on the end of a missile or something? It feels like there are more efficient ways of doing that, but what do I know? And you'd really want a world model, not a large language model.

Rahul Yadav (17:47)

There was a whole

Someone had a photo of a Starshield antenna coming out of a drone. It was circulating around recently. we're not too far away then. We're, yeah. Yeah. Yeah, I'm not saying they're the same things as like running a model, but we can keep pushing it closer and closer. And again, like.

Dan (17:56)

Yeah.

I mean, certainly Ukraine was using it for remote control, right, as they're Starlink on.

Yeah, I mean, obviously there

is a future where that's today. Well, already happening and probably has already happened with like specialized models, you know, at least in research environment.

Shimin (18:22)

it's definitely happening today. There are two additional developments from earlier today that I don't know if you are caught up on yet, but at some point earlier today, Sam Altman mentioned something about they've decided to hold on with the negotiation with the Department of War in order to refine the terms around the autonomous weapon and domestic surveillance. But then

Two hours later, Sam Altman had an OpenAI staff meeting saying that the operational decisions should all be left to the government as part of the contract. So there has been kind of a mass migration off of ChatGPT at least online, over the weekend. And there are a lot of support for Anthropic. There were some top tier memes about

Rahul Yadav (19:02)

Yup. ⁓

Shimin (19:10)

this is Claude. Claude is your friend. Like old timey World War II poster style memes that I probably spent too much time scrolling through over the weekend. And so it seems like Sam has said clarifying or maybe contradictory things multiple times. At least from my vantage point. He's

flip-flopping a bit, which as we know is not Sam Altman's usual MO at all.

Rahul Yadav (19:37)

I, yeah, I, you know, I think all the, the, the, employees at OpenAI and all the labs as far as I can tell are very passionate and outspoken about their work. And I think from what I can tell, he's probably getting a lot of questions and pushback and all that, which is

Dan (19:37)

Stay.

Shimin (19:41)

You

Rahul Yadav (19:56)

reconsider these things.

Shimin (19:58)

Yeah, as much as I kind of do see, you know, OpenAI's point of view, if you're a company operating in a country, you probably shouldn't have too much power over how the government decides to use your tool. I do see that point of view. But on the other hand, you know, if we recall

Rahul Yadav (20:12)

Yep.

Shimin (20:16)

why Anthropic was formed in the first place. It was after OpenAI had this ownership drama and no longer was a public benefits corporation or no longer is open by some definition of open. And some of the folks who are very much disagreeing with that left and founded Anthropic.

You probably know where my cards are in this game. I... Let's just... Yeah. Well, I had a free month of ChatGPT subscription that they were giving out. And let's just say I didn't renew it over the weekend.

Dan (20:37)

We know where your subscriptions are. I don't know about your cards, but...

Rahul Yadav (20:48)

Let me add one other thing to this before we move on. The most positive take I saw in all of this was Ramez Naam, I think their name was. I'm not sure what this person does or anything. I just remember the name. They were saying like.

Dan (20:50)

You guys are gonna make me be the OpenAI person, aren't you?

Shimin (20:53)

You drew the short straw.

Rahul Yadav (21:07)

This has brought it to the public's attention in a very, these are serious things and hopefully Congress will take action because all of this comes down to Congress needs to pass some laws, take more action here and Congress moves very slow.

painfully so and hopefully this really triggers them into action and is able to get bipartisan support because I think both sides agree, especially on the mass surveillance piece at least. So yeah, hopefully that's something good we get out of this.

Shimin (21:36)

All right, we're gonna talk about this all day, but let's keep on following the drama. Yes, let's do that.

Dan (21:40)

Talk about open weight models. My favorite

topic.

Steerling 8B is actually is it even open weight? I don't know. I ⁓ sort of put it in there because it's my usual shtick about open weight stuff. But the reason that really made the news list for this episode was because it has an interesting feature that I've not seen in any other model, even Frontier Lab stuff to date. So it's an 8B model. There's nothing super exciting about that other than they claim that it was trained

Shimin (21:48)

It is.

Dan (22:10)

much cheaper computationally than its sibling 8B models. So you can compare it to other open-weight stuff. I guess Llama 3.1 is probably the most famous in that size, but there are newer ones like the smaller Qwen 3 models that folks are still running, because you can run them on CPU on like an everyday laptop that you can pick up and still kind of do some cool stuff with it locally. So anyway.

The really fascinating thing about this is it is what they are calling, let me actually quote it, the first inherently interpretable language model. And what they mean by that is it is a model that can trace any token it generates to its input context, meaning what you typed into it. So they have a cool little demo thing on their website where basically

they have a prompt and you can click on chunks of the prompt and then it'll show you how it weighted the input features in the ML sense to try to understand how that impacted the output. And then there's a couple other things where you can look through the concept attribution where what concepts was it interested in when it generated that response and then.

Finally, and this is the one that I find most fascinating, they have training data attribution. So you can actually, in theory at least, link it back to broadly where the information it used came from, which is something that I feel like, I mean, the number of times that I tell a frontier lab LLM, cite your sources, because I want to make sure you didn't just make this up and you actually used a fetch tool or something to pull it in, right?

is quite high. I find that very exciting because it could be pretty explosive in terms of if this were to make it into frontier models where you can like really understand where it is and could also be interesting from an intellectual property standpoint too, right? you know, authors and other folks are claiming that their stuff has been, you know, likely has been actually trained on and now you could prove it with something like this, which would be kind of cool or prove that it was used in a given output.

So the way it works internally is a little beyond me, but I can take a swing at it unless you want to, Shimin.

Shimin (24:15)

I,

I did a slightly deeper dive. didn't see where they, talked about the training data attribution piece. I don't know if you looked into that, but I mostly focused on the concept attribution.

Dan (24:27)

It was, it was one of the, like, I

don't really understand the, if it's, it's sort of like mixture of experts, I think is how I understood it to work where essentially there's like a router layer and that layer is used to create the attribution vector that is pointing at which section it came from. And there's three sections, which is,

Shimin (24:37)

Mmm.

Dan (24:47)

the known concepts, which is like basically stuff that it was fed, discovered concepts, which is stuff that it sort of like came up with as it was being trained. And then residual, which is things like, okay, whatever, we'll just throw it in this bucket because we don't know what it falls under.

So maybe you can explain the map to me at some point.

Shimin (25:04)

Yeah, I actually

think that was a part of their concept attribution. what they did. Yes. So, which like is really interesting because if you have, you have, you have the vector of the concept that the output is generated from, then you can manipulate the concepts directly. in one example here, they,

Dan (25:10)

So that piece was just concept. Interesting. Okay.

Shimin (25:26)

suppress concepts about fake information and misinformation generation and deception. And you can use that suppression to prevent the model from telling the end user how to spread misinformation about election results.

Dan (25:38)

Yeah, without retraining

or fine tunes or anything like that. It's just like the vector level. Yeah.

Shimin (25:43)

Exactly.

And what they did was, and they have a pretty cool diagram of how they did this on their blog. They took a regular trained large language model. Then before it goes from the output back into, you know, token logits for next token prediction, they stuck a head, which is a new vector, right in front of the output.

And inside that head, they did additional training where some sections of the head was already mapped to concepts that they decided ahead of time. That's the part about the 30 and known concepts and known concepts. And then there are some residual and then some portion of the head are concepts that they pre-programmed in. And then the rest of that head were concepts.

that they train the model to learn via manipulation of the loss function. So one of the things they did was they trained the loss function to be as perpendicular as possible in this concept head. So each concept should have nothing to do with each other.
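A minimal sketch of the "as perpendicular as possible" idea, assuming the penalty is something like the sum of squared cosine similarities between every pair of concept vectors. This is an illustration only, not Guide Labs' actual loss; the function names and toy vectors are made up.

```python
import math

def cosine(u, v):
    """Cosine similarity between two vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def orthogonality_penalty(concepts):
    """Sum of squared cosine similarities over all distinct concept pairs.

    The penalty is zero only when every pair of concept vectors is
    perpendicular, so minimizing it pushes concepts apart.
    """
    total = 0.0
    for i in range(len(concepts)):
        for j in range(i + 1, len(concepts)):
            total += cosine(concepts[i], concepts[j]) ** 2
    return total

# Hypothetical toy concept vectors.
perpendicular = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
overlapping = [[1.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

print(orthogonality_penalty(perpendicular))  # no overlap, so no penalty
print(orthogonality_penalty(overlapping))    # the first two concepts overlap
```

In a real training run a term like this would be added to the usual next-token loss, so the model is rewarded for keeping concepts from overlapping.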

Dan (26:51)

I just got slow, but I got it.

Shimin (26:53)

Yeah. And then the other loss function they had was.

Dan (26:58)

is when news thread mill turns into a deep dive. We got Shimin interested in the math and you can't stop the man.

Shimin (27:01)

Yes.

I just briefly went

over it. And then the next one was concept presence loss. So they try to make sure that some number of concepts were indeed included as a part of the output. Then whatever cannot be explained by those concept activations becomes a residual. So they also try to minimize residual reconstruction loss based on that. Really clever approach, requires additional training, but

I can't deny the usefulness of this. Instead of trying to find out, you know, which neuron is the Golden Gate neuron, it forces the model to be trained on these non-overlapping concepts. So then you can just manipulate that row of the vector at the very end, as opposed to trying to go look for those concepts, you know, in a giant ocean of weight matrices. ⁓
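A rough sketch of what "manipulate that row of the vector" could look like, assuming the concept directions are orthonormal so a hidden vector splits cleanly into per-concept activations plus a residual. The concept names, vectors, and helper functions here are hypothetical and not taken from the Steerling release.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def decompose(hidden, concepts):
    """Split a hidden vector into concept activations and a residual.

    Assumes the concept directions are orthonormal, so each activation
    is just the projection of the hidden vector onto that direction.
    """
    activations = {name: dot(hidden, d) for name, d in concepts.items()}
    explained = [0.0] * len(hidden)
    for name, direction in concepts.items():
        for k, d in enumerate(direction):
            explained[k] += activations[name] * d
    residual = [h - e for h, e in zip(hidden, explained)]
    return activations, residual

def recompose(activations, residual, concepts, suppress=()):
    """Rebuild the vector, leaving out any suppressed concepts."""
    out = list(residual)
    for name, direction in concepts.items():
        if name in suppress:
            continue
        for k, d in enumerate(direction):
            out[k] += activations[name] * d
    return out

# Hypothetical concept directions and hidden state.
concepts = {"weather": [1.0, 0.0, 0.0], "deception": [0.0, 1.0, 0.0]}
hidden = [2.0, 3.0, 0.5]

activations, residual = decompose(hidden, concepts)
steered = recompose(activations, residual, concepts, suppress={"deception"})
print(activations)  # how strongly each concept fired
print(residual)     # what the named concepts do not explain
print(steered)      # same vector with the "deception" component removed
```

No retraining or fine-tuning is involved in the suppression step: the weights stay fixed and only the concept activations are edited before the vector goes on to produce token logits.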

Dan (27:51)

Okay.

I actually

didn't realize that. So thanks. That was super useful. ⁓

Shimin (27:58)

Yeah, so it's a really

cool approach. They are pretty new, right? By Guide Labs, guidelabs.ai. I didn't find too much information about them. You could join the wait list, but excited to read more about, yeah, where they go, where they get the source attributions from, especially. Yeah, cause I didn't see anything. But yeah, very cool model. Listeners, try it out.

Dan (28:03)

Mm-hmm.

where they go with this. Yeah, it's fascinating.

Shimin (28:20)

Rahul, do you have anything to-

Rahul Yadav (28:21)

I was trying to read through like, how did they achieve the interpretability?

And then the bigger curiosity I have is can you achieve interpretability even if you have a much larger model? Because at some point this becomes like a you have tons of concepts and at some point like can you scale it or not?

Shimin (28:42)

Yeah, that's the open question, right? Like if you make that concept back there larger and larger and larger, would the concept, you know, continue to scale. They have a paper on scaling interpretable language models to a billion parameters. So they probably started with like a toy model and then got it to 8B and they're probably gonna try and take it to like 800B, but we'll see. Yeah. Rooting for them. Okay.

Dan (28:48)

It continued to scale, yeah.

Rahul Yadav (29:02)

Yeah, yeah. Nice, yeah.

Dan (29:05)

gonna be

a lot of compute.

Shimin (29:09)

On to technique corner. I wanted to introduce verified spec driven development by DollSpace-Gay on GitHub. Doll is great. Follow it on BlueSky. Doll's got hot takes about AI driven programming. Anyways, so.

Dan (29:27)

This is also, I think,

the first time we've done a section of the podcast on a gist before. So GitHub gist, here we go.

Shimin (29:35)

Yes,

It is a GitHub gist, but it is essentially a markdown documentation of a particular workflow. And the idea is to combine some of the stuff that we already use and know every day, like spec-driven development and test-driven development. But Doll adds this verification-driven development as a part of the workflow. So the idea

Dan (29:43)

I was teasing.

Shimin (29:56)

is to always have an adversary that reviews the work, both the spec and the output, to essentially come up with a critique, and only accept the code if everything that the adversary comes up with has been fully satisfied. So it's a bit of a convergence test. So in this tool chain, you have the architect, which is us, the human developer.

The builder, is your AI coding agent like Claude. The tracker, doll has created a chain link that's very similar to Beads where tickets are beads So you do want to have a tool for tracking your tickets. And lastly, you have the adversary, which is the critique generation that we talked about just a second ago. And the pipeline goes through different phases.

Phase one is spec crystallization, you usually have the builder create a spec and then verify it via, verify the architecture via, know, provable properties, catalog, purity boundary maps, verification tool links and property specifications. So it is a little more in depth than the...

than the average kind of spec driven development spec. And then you have a spec review gate where the adversary conducts critiques on the existing spec. And then you iterate until the adversary is satisfied. And then you go into the test first development, the TDD part where you do red green refactor. So it's red green testing, but there is a refactor part where after the test is completed and the code is working, you hit the adversary to be like, hey,

This is missing. This is not covered by the spec. And you send the builder back to it, refactor the code until it's satisfied. That's kind of the main heart of this is like do spec driven development using TDD, but have an adversary at each phase that critiques the artifact. And if it's not satisfied, either go back to refactor or go back to

the specification portion. I have to say I did try it with a side project over the weekend, where I didn't use like an n8n or something like that to create a workflow, but I basically asked Claude Code to generate a skill for VSDD and then apply the skill as it was developing the side project.

I do think that the verification gate is helpful. The final project, which I am looking forward to sharing with the world, had fewer bugs than it otherwise would have if I had just gone through, like, my regular plan and development phase. There were still bugs, but it was better for sure.
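The adversary-gated loop described above can be sketched roughly as follows. This is a minimal illustration, not the code from doll's gist: `run_gate`, `GateResult`, and the toy builder and adversary are hypothetical names, and in a real setup the two callables would be LLM agent invocations rather than string functions.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical signatures: real builders/adversaries would be agent calls.
Builder = Callable[[str, List[str]], str]   # (artifact, critiques) -> revised artifact
Adversary = Callable[[str], List[str]]      # artifact -> list of open critiques


@dataclass
class GateResult:
    artifact: str
    rounds: int
    converged: bool
    history: List[List[str]] = field(default_factory=list)


def run_gate(artifact: str, builder: Builder, adversary: Adversary,
             max_rounds: int = 5) -> GateResult:
    """Iterate until the adversary has no critiques left, or give up."""
    history: List[List[str]] = []
    for round_no in range(1, max_rounds + 1):
        critiques = adversary(artifact)
        history.append(critiques)
        if not critiques:
            # Convergence: the adversary is satisfied, so the artifact is accepted.
            return GateResult(artifact, round_no, True, history)
        # Otherwise send the builder back with the critiques.
        artifact = builder(artifact, critiques)
    # Round budget exhausted; the last revision was never re-reviewed.
    return GateResult(artifact, max_rounds, False, history)


def toy_adversary(artifact: str) -> List[str]:
    # Stand-in reviewer: insists that two topics appear in the artifact.
    required = ["error handling", "tests"]
    return [f"missing: {item}" for item in required if item not in artifact]


def toy_builder(artifact: str, critiques: List[str]) -> str:
    # Stand-in builder: appends whatever the adversary said was missing.
    additions = [c.removeprefix("missing: ") for c in critiques]
    return artifact + " + " + " + ".join(additions)


if __name__ == "__main__":
    result = run_gate("draft spec", toy_builder, toy_adversary)
    print(result.converged, result.rounds, result.artifact)
```

The same gate can be reused at each phase (spec review, then code review after red-green), with the `max_rounds` cap as a guard against an adversary that never runs out of critiques.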

Dan (32:34)

Did you use Claude as the adversary? Just because it's all Claude's skill?

Shimin (32:38)

Yes. I think 80% of the time it did just kick off an Opus agent for the verification gate, but some 20-25% of the time it actually used Sonnet as the adversary, with the justification that the methodology recommends using a different model for verification. It was smart enough to kick off Sonnet to do that.

Yeah. I think this methodology, you know, is an evolution of all the things that we're doing already versus the things that we're doing, but haven't formalized, right? Stuff like, refinement and convergence via, agents. That's something that I've done both at work and also on my own side projects. So it's, good to see it kind of incorporated nicely in a

in a single pipeline. Looking forward to see where doll goes with this.

Dan (33:31)

be the next Gastown

Shimin (33:33)

It could be the next Gastown.

Dan (33:34)

I just wanted to say Gastown I'm not lying.

Shimin (33:37)

Well, at least the various agents have much more normal names than polecats and Deacon and dogs.

Dan (33:43)

I hope we don't get rid of that part, you know? I just, I don't know.

Shimin (33:47)

Okay. Next up we have our post-processing where for the first time we have an interview with the author of a blog post, Martin Alderson. So with the power of technology, we're going to go to our interview.

Shimin (34:04)

And for this week's post-processing, it's my pleasure to have Martin Alderson join us. He's a Hacker News blogger all-star. He's co-founder of Catch Metrics and an all-around cool guy. He blogs about the intersection of software engineering and economics with a focus on AI transformation at martinalderson.com. Hi, Martin. Thank you for joining us.

Martin Alderson (34:28)

Hey, thanks so much for having me. Really excited to talk to you.

Shimin (34:30)

Yeah, so you ran an experiment the other week about which web frameworks are the most token efficient for AI agents, where you created the same blogging platform using 19 different frameworks, anywhere from ASP.NET to, I think Phoenix was the most complicated one. What was the most surprising thing about

your experiment to you, the results of the experiment.

Martin Alderson (34:55)

Yeah, so in the article I go through it in a bit more detail. The first build was just scaffolding a blog out, the classic Hello World kind of application for a web framework. What I thought was really interesting was that, unsurprisingly, the most token efficient ones were sort of minimal frameworks, so Flask, Express, versus bigger frameworks like Rails or Next.js.

I suspected though that there was going to be much more token efficiency on subsequent features. So in the blog I detailed adding a new feature, adding sort of tags or categories to each of them in the same Claude Code session. And there wasn't really much efficiency gained on the subsequent one. So basically the minimal frameworks were a lot more efficient overall looking at it sort of end to end. They were quicker and more efficient in number of tool calls and token usage.

Shimin (35:25)

Mm-hmm.

Martin Alderson (35:44)

building the initial application but then also a subsequent follow-on there wasn't a huge amount of difference between them.

Dan (35:49)

And you mostly use Claude for all these, right?

Martin Alderson (35:52)

Yeah, I used Claude Code with Opus 4.6, yeah.

Dan (35:55)

Gotcha. Do you know if it like read docs too? That was the other thing I was wondering is like how much of it is like the overall documentation for minimal frameworks is gonna be lighter just by definition.

Martin Alderson (36:06)

Yeah, so I think I did put the prompt I used. I didn't do anything past that. I didn't drop any docs in or anything. So yeah, I think it was mostly going on its memory rather than reading loads of docs, but some of the frameworks had real trouble with just the amount of sort of scaffolding output it did. And it just didn't know enough about each framework, I suspect, to sort of like do that efficiently.

Dan (36:11)

Yeah, I didn't tell it to look for it. Yeah.

to.

Shimin (36:31)

Yeah.

Martin, one thing we kind of have discussed a couple of times on this podcast is like, what would the future of new tooling look like? Right. Like with the way folks are relying on AI agents to write their code, how would, let's say I create the new best framework tomorrow. Like what would that adoption curve look like if the, my new framework is not going to be a part of training data of an AI agent?

Martin Alderson (36:58)

Yeah, I think it's a good point. One thing I would say though, which surprised me, is that nearly all of the frameworks did work. So it wasn't that some just didn't work. I think if I'd run this six months ago, I'd be surprised if even a handful worked with no interaction whatsoever. We shouldn't read too much into the token efficiency. I think the actual bigger point is that even very esoteric frameworks that

Shimin (37:14)

Mm-hmm.

Martin Alderson (37:23)

I don't really have much experience in at all, did manage to build a workable blog. They all look pretty good. I mean, they weren't jaw dropping design, but they all were pretty interesting in terms of like they were styled quite nicely. They all made sense. There weren't any bugs I could see in a very quick evaluation of them. So I think from my perspective, I was looking at that. It's not that like...

Shimin (37:31)

Hahaha

Martin Alderson (37:47)

Some frameworks just can't be used. I think some are more efficient just because they have less files involved in them.

Shimin (37:53)

Yeah, our discussion has been around like how does my new mousetrap framework become popular if, if no one knows about it, you know, like.

Dan (37:58)

Yeah, that's a good question. And

the LLMs are all trained on, you know, whatever was popular during their training set. That's fair.

Shimin (38:03)

Yeah.

Martin Alderson (38:06)

Yeah, no, I think that's a very fair point. So I think there are two separate questions there. Firstly, I sort of said that any decent framework works if prompted; I'm sure the agent can figure out how to use it. I think there's a separate question there though about unprompted, how do new frameworks get discovered? So if you release a new framework, how do people know about that? And I think that's a very interesting question. I think we're really at the start of

how do agents discover new libraries, new functionality? It almost feels to me there needs to be some sort of app store, not quite the same metaphor, but some sort of marketplace or some way that this can be discovered. And I think what we'll actually see is that you used to have these like curl bash one liners to set up an environment in, say, Rails or whatnot. You run this, it'll set up all the stuff. I think...

Shimin (38:54)

Right.

Martin Alderson (38:55)

We're already starting to see it, but I'm thinking if you had this very new cool framework, it would say, drop this into your new project or migration project, use whatever framework you're coming up with, Dan's framework or Martin's framework, grab the docs from here. Yeah, we'll get a better name for it, for sure. Grab the docs from this URL and start implementing it there. I think that's potentially one way, or do we just end it with this?

Dan (39:08)

Don't use mine, it's bad.

Martin Alderson (39:20)

quite bad monoculture of just everything using Next.js and Python out of the box because that's what it seems to use the most. And the final thing I'll say on that, I do think the harnesses and the Frontier Labs do have, there is an interest in them maybe doing some prompting of their own there in the system prompt, because if there is a framework which is far more efficient and works a lot better, you may find that they, I don't know if they do this now in some sort of fine training or fine tuning or post-training.

Shimin (39:24)

Mm-hmm.

Yeah.

Martin Alderson (39:46)

thing, okay, use these frameworks because we know that the end customer's going to get better results with them if not prompted otherwise. So yeah, they've got a lot of power there as well, I think.

Dan (39:55)

Yeah, that's true. Also, like depending on the context, like for example, in Claude Code, they would benefit heavily from doing that because if it's more token efficient, it's less, you know, quote unquote free runs that they're doing on the backend for you.

Martin Alderson (40:09)

Exactly.

No, that's exactly what I mean. Like if they're getting annihilated by token usage, which I think we've all seen this week, last weekend with Claude, you know, you've got Boris tweeting endlessly that we're struggling to scale up. Then they do have a lot of incentive to make it more efficient, especially on the monthly plans.

Dan (40:15)

Right.

Shimin (40:24)

Or maybe once the capacity is built out, have incentive to bloat the inference budget because the margin is highest on the inferencing side of things. So we don't know where the incentives lie.

Dan (40:34)

So it'll actually be

Martin Alderson (40:34)

Yeah, absolutely.

Exactly. There is an argument the other way around there as well. Yeah, for sure.

Dan (40:35)

the least efficient.

Shimin (40:39)

I got another question for you. This is from Rahul, our third co-host who isn't able to make it today. he, you know, he's a subscriber to your blog. He's a big fan. And he mentioned that you posited a very important question about the maintenance of abandoned software. He's curious if you have any thoughts about...

Martin Alderson (40:57)

Mm-hmm.

Shimin (41:00)

you know, what the solution to that would be. Would it be cyber insurance, people who specialize in pen testing and securing outdated things that no one will fix, and maybe, you know, providing quarantine services or something like that.

Martin Alderson (41:11)

Yeah, I think it's a really big problem. there's been a lot of discourse, well, to back up. The models are getting so good at finding security vulnerabilities. They've always been surprisingly good at that. I don't think people have pushed them very far. And I think there's been a bit of a backlash from the security slash tech community that there's been so many AI slop bug bounties put in that I think people have maybe underestimated how good they are with someone that knows

Dan (41:34)

Yeah, like curl specifically.

Martin Alderson (41:36)

knows what they're doing exactly. But yeah, so I think we're going to see a lot more vulnerabilities. And I think it's sort of fine, the mental model that a lot of people have of, okay, there's going to be offensive use of these LLMs and harnesses to find security flaws. But that's fine, because they could also be applied for defense as well. The problem which I sort of wrote on a recent article is that

There's so much software that's abandoned and it hasn't got any defensive capabilities. So I think that's a real big gap in that mental model because you simply can't patch abandoned five, 10 year old CMSs or whatever kind of software you've got there. So yeah, I think it's going to be a really, really big problem. I think it's going to happen very, very quickly. I think it's going to not happen at all until it happens. I think I saw one article just yesterday actually.

showing someone is doing this at scale on GitHub Action like vulnerabilities. I think it's a nice use of it, but not nice use of it. Yeah, the only thing I can think of is that ISPs or telcos just start quarantining a lot more servers. Like that's the only way that you can sort of look at it is that we move into sort of a permission model where if you want a publicly routable IP address for your server, you sort of have to prove you're

secure enough, rather than now, where it is very much you get the IP until maybe you get blacklisted for some very nefarious uses.

Dan (42:53)

Yeah, once it's been completely owned and yeah, that's fair.

Martin Alderson (42:56)

Yeah, exactly.

Yeah. So I think we might start seeing proactive, you're getting null routed, your IP is just taken off, you know, by AWS or even by your residential Comcast or whatever ISP you're using.

Dan (43:09)

Yeah, that's going to be interesting too to see the intersection of that and like routers, right? You know, like especially home, home, because like there's already been quite a few problems around like botnets being run out of just like, you know, unsuspecting people's like hardware that's just quietly sitting there in the credential or whatever.

Martin Alderson (43:14)

Yeah.

Yeah, that's exactly what I'm worried about.

All the giant DDoS attacks that you see are usually from IoT devices that have got pwned in various ways. The thing is, a lot of these run quite crappy upstream internet connections. They're not VPSs with 1 to 10 gigabit uplink with very good peering. I think that's a little

start of what may happen.

which is quite terrifying really, I think the implications of when you move that forward.

Shimin (43:52)

You

Yeah, you laughed. was like, that's quite dystopian. ⁓

Dan (43:58)

The laugh

of sadness. Like, oh gosh, here it comes.

Shimin (44:00)

⁓ Yes, it's the dog

where, you know, everything's okay. So you're the co-founder of Catch Metrics. Are you willing to speak a little bit about how y'all are leveraging AI in your day-to-day work?

Martin Alderson (44:13)

Yeah, so I think that it's been completely transformative. It's been a complete transformation in terms of how I work really. So I think a lot of my blogs come out of what I'm actually doing day to day with agents. I think we're far further ahead than I expected we'd be so quickly. And yeah, I think just everything's been changed by it. I recently wrote another article about using OpenCode for pull requests. So instead of having to use a SaaS provider,

using OpenCode to run on your CI/CD pipelines to find issues. That's been awesome. And I think there's going to be, you know, we're looking at using that even more and more in terms of our DevOps and CI/CD pipeline. When we make a ticket on JIRA or GitHub issues, can we just get it to make a prototype of that first, which the engineer then reviews, and then another agent reviews the code? It's sort of hurting my head how quickly it's changing, how like

as a sort of engineering manager type, how to manage the transformation there. But we're seeing like really awesome productivity increases. like, I think everything I've been touching with agents recently is like always surprising to the upside. I've never really done something and went, that didn't really work out. Like I wanted to, the results were pretty bad. If anything, it's sort of the opposite. It's like, how can a simple two paragraph prompt end up doing such impressive code review that would take me hours to probably do into such detail?

I'm not saying it's perfect, but yeah, I think the upside is very much there, if you know what I mean.

Dan (45:34)

Yeah.

Yeah. And humans make mistakes too. I feel like that's something that doesn't get talked about very much in the like AI versus, you know, yeah. Yeah.

Shimin (45:36)

Yeah, and.

Martin Alderson (45:41)

Exactly. yeah, so many times I've reviewed code, I've missed absolute clangers, right? So it's sort

of showing me up as well in terms of how detailed it can do it so quickly.

Dan (45:50)

It's like, you know, especially there's like the sizing thing that I like to harp on so much about, like, you know, the 100 line PR gets reviewed like intensely, you know, everyone's looking at it, but the 100 file one, just like, yeah, it looks good to me. Ship it.

Shimin (45:51)

Yeah.

Martin Alderson (46:03)

Exactly.

Yeah. And I think that's what's really interesting about it where I write the articles, but the PRs are getting bigger and more frequent. It's just very difficult to review that level of code that's coming in across all the sort of projects I work on and overlook. So that's why I started doing it. I did look at a lot of the SaaS tools, but as I put in the article, I'm just not happy giving repo admin access to a lot of SaaS tools, especially for anything that's got some confidential stuff in.

Dan (46:29)

Yes.

Martin Alderson (46:31)

We're already using OpenCode and Claude Code, so I don't see it's a problem to use that in different ways as well. But yeah, I think in terms of what we're building in Catch Metrics, we're working on a really interesting new agentic web performance agent. So I'm hopefully going to be launching that. We're testing with some clients at the moment, but hopefully going to be launching that sometime in the next month or two.

Dan (46:53)

Nice. That's cool to see the sort of agentic stuff leaking out into products slowly.

Shimin (46:54)

It's very exciting.

Martin Alderson (46:58)

Exactly. Yeah,

you can see why that happened.

Dan (47:02)

Yeah, it's like we're already using it in all these other areas, so why not there?

Martin Alderson (47:06)

Exactly, exactly.

Dan (47:07)

Cool. Can I ask you a couple questions about your actual stack? What do you use day to day? Is it mostly Claude? Are there any plugins or particular? We do have the tooling segment of our podcast if you listen to it. Anything you'd recommend. I'm a big fan of beads personally, so I'm always pushing that on people.

self-requirements like that that you'd like to.

Martin Alderson (47:30)

No, mean, really,

yeah, really, it's just been Claude Code in terms of my day-to-day stuff. I've been using OpenCode for sort of headless like tasks, but in terms of what I've used day-to-day, I've got so used to Claude Code and the permissions model. When I switched even to Codex, which has come on loads, I don't know, it just doesn't quite gel right. So there's definitely already some muscle memory in terms of how these things work. I think the one thing that I...

Dan (47:44)

Mm-hmm.

Martin Alderson (47:55)

haven't got working completely is Claude Code's language server protocol support. I'm super excited to see more static analysis come into it because a lot of the repetition and nonsense it can get into, I think, gets solved with better static analysis on the code. That has been somewhat broken for quite some time, if you've been following the GitHub issues on the Claude Code repo. I hope that that gets more stable because I think there's a lot of potential there.

Honestly, I've probably kept it quite simple. I keep on trying other things. I've tried various UIs. I really enjoyed using Conductor on Mac, but it's not on Linux, which is sort of a GUI for running multiple at once. I thought that was really polished and really nice, but yeah, they don't have a Linux version. So sort of out of luck there.

Dan (48:40)

That drives my choice of like Git UIs too, unfortunately. I need something that's going to look the same on every platform because I use everything. So that's cool. Thanks for sharing on that.

Martin Alderson (48:44)

Yeah, exactly. ⁓

Yeah. So yeah, yeah, I

think the one thing I do really recommend people do is just have like a scheduled task that goes through your repo and updates your CLAUDE.md files or AGENTS.md files. So it looks at the recent commits and just flags if anything is sort of out of date or not matching what your current state is in the CLAUDE.md or AGENTS.md file and just opens a PR.

I found that super, super helpful in terms of like keeping that up to date because it's very, very easy for it to sort of drift out of sync. And that's where a lot of issues start coming. So yeah, I feel like I should be using more cool, wonderful stuff, but I'm just sort of finding just using Claude Code with minimal skills and minimal plugins works the best.
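One small piece of that drift check can be sketched without an agent at all. The snippet below is a deliberately simplified, deterministic stand-in for what Martin describes (his version has an agent read recent commits and open a PR): it only flags backticked paths in an instructions file that no longer exist in the repo. The function names and the sample file contents are hypothetical; `git ls-files` is the only external command used.

```python
import re
import subprocess
from typing import Iterable, List

# Backticked tokens without whitespace, e.g. `src/app.py` or `tests/unit/`.
BACKTICKED = re.compile(r"`([^`\s]+)`")


def referenced_paths(doc_text: str) -> List[str]:
    """Return backticked tokens that look like paths (they contain a slash)."""
    return [token for token in BACKTICKED.findall(doc_text) if "/" in token]


def stale_references(doc_text: str, repo_files: Iterable[str]) -> List[str]:
    """Paths mentioned in the doc that match no tracked file or directory."""
    files = set(repo_files)
    stale: List[str] = []
    for path in referenced_paths(doc_text):
        is_file = path in files
        is_dir = any(f.startswith(path.rstrip("/") + "/") for f in files)
        if not (is_file or is_dir) and path not in stale:
            stale.append(path)
    return stale


def tracked_files(repo_dir: str = ".") -> List[str]:
    """List files tracked by git in repo_dir (requires git on PATH)."""
    out = subprocess.run(
        ["git", "ls-files"], cwd=repo_dir,
        capture_output=True, text=True, check=True,
    )
    return out.stdout.splitlines()


if __name__ == "__main__":
    # Made-up example content, standing in for a real CLAUDE.md.
    doc = (
        "Run tests in `tests/unit/`. Entry point is `src/app.py`. "
        "Migrations live in `db/migrations/`."
    )
    files = ["src/main.py", "tests/unit/test_api.py"]
    print(stale_references(doc, files))
```

In a scheduled job one would presumably pass `tracked_files()` instead of the hard-coded list and hand any stale entries to the agent as a starting point for its update PR.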

Dan (49:32)

I mean...

I mean sometimes that is the case right because if you load the thing up with like hundreds of MCP servers It's like you've got like half your tokens are already used,

Martin Alderson (49:46)

Yeah, no,

totally. The one thing I have done though, instead of using skills as much, is I've built a lot of CLIs for just internal use. For analytics, for customer support, for email, I can have Claude Code reach out to these very simple CLIs. They're just wrappers around APIs. And I find it's really amazing having, for example, a support email, but getting a draft of it written up

Dan (49:54)

Mm-hmm.

Shimin (49:58)

Mm.

Martin Alderson (50:12)

with the context of all the source code of the project I'm working on. So that's something I'd really, really recommend doing if you're a bit like me and having to do not pure code all the time is start bringing a lot of your other services like JIRA, like GitHub into Claude Code with your own CLIs. They literally take two minutes to make, I find, if you drop the API docs in. And because it's got access to your source code, it can just be really amazing at figuring out issues. So that's one thing I think I'm...

hopefully pushing the boundary on, but in terms of all the plugins and skills stuff, I haven't really got that much value out of them yet.
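A thin CLI wrapper of the kind described here might look something like the sketch below. Everything about the API is an assumption for illustration: the `support` command name, the `https://support.example.com/api` base URL, and the `tickets` and `show` subcommands are made up, not taken from any tool Martin mentioned. Only the Python standard library is used.

```python
import argparse
import json
import urllib.parse
import urllib.request

# Hypothetical internal API; replace with whatever service you are wrapping.
BASE_URL = "https://support.example.com/api"


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="support",
        description="Tiny wrapper around a (hypothetical) support ticket API.",
    )
    sub = parser.add_subparsers(dest="command", required=True)

    tickets = sub.add_parser("tickets", help="list tickets")
    tickets.add_argument("--status", default="open")
    tickets.add_argument("--limit", type=int, default=10)

    show = sub.add_parser("show", help="show one ticket")
    show.add_argument("ticket_id")
    return parser


def build_url(args: argparse.Namespace) -> str:
    """Translate parsed arguments into the request URL."""
    if args.command == "tickets":
        query = urllib.parse.urlencode({"status": args.status, "limit": args.limit})
        return f"{BASE_URL}/tickets?{query}"
    ticket_id = urllib.parse.quote(args.ticket_id, safe="")
    return f"{BASE_URL}/tickets/{ticket_id}"


def main(argv=None) -> None:
    """Fetch the URL and print the JSON response for the agent to read."""
    args = build_parser().parse_args(argv)
    with urllib.request.urlopen(build_url(args), timeout=10) as resp:
        print(json.dumps(json.load(resp), indent=2))
```

Calling `main()` from a script entry point gives the agent a command such as `support tickets --status open --limit 5`; keeping `build_url` separate from the network call makes the wrapper easy to check without hitting the API.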

Dan (50:44)

So you're not using Gastown 24/7 like Shimin is?

Martin Alderson (50:47)

I'm not. did try the

new... I feel very small when I see that kind of stuff. I did try the new Claude Code team swarm functionality, which was awesome. But I found that often not much of software development was parallelizable. It was constantly getting blocked on other things. So it was just adding a lot of the agentic coordination overhead.

Shimin (50:49)

Hahaha

Dan (50:55)

I've never used to do this.

Shimin (51:01)

Hehe.

Dan (51:11)

Overhead, yeah.

Martin Alderson (51:12)

Yeah, so turns

out a lot of software engineering isn't actually very well suited for parallel agent stuff, I find. And then even if you do use parallel, which I'm sure everyone else has, you've got multiple Claude Codes working or whatever agent harness you prefer in Git worktrees. I often find you just end up with quite a lot of merge conflicts, especially database migration problems that end up with you just...

probably taking longer than just doing it one or two at a time. Maybe I'm not galaxy-brained enough, but I really think keeping it simple, really your AGENTS.md or CLAUDE.md file, and giving it access to databases and APIs that you're using in your organization seems to work the best, rather than thinking you're making loads of progress and then spending a lot of time after resolving a load of conflicts and coordination problems.

Dan (51:42)

You

Shimin (51:55)

Mm-hmm.

Dan (52:02)

Yeah, totally. Just big company simulator being run with AI.

Shimin (52:02)

Yeah, it's been my experience as well. ⁓ I know we are almost out of time. Yeah. ⁓ But

Martin, one last question for you. We, know, kind of our listenership is mostly working software devs who are somewhere on the spectrum of the adoption of the agent-based software development paradigm. Like what would be your number one advice for someone who maybe uses Claude daily, but isn't doing

full on kind of agent only software development.

Martin Alderson (52:34)

Yeah, I think just sort of being willing to like test it loads. So like, think that the difference between, for example, Opus 4.1 and Opus 4.5 was so enormous in hindsight, like, yeah. And I think a lot of the hype is miss, there's a lot of hype and there's a lot of, you know, pros and cons to everything and detractors and people that are really, really on board the sort of hype train. But I think just constantly like checking in like,

Dan (52:44)

just staggering.

Martin Alderson (52:58)

I've always really used Claude Code as the agent of choice. I have checked in on Codex, which I was super unimpressed with not so long ago. And now I'm like, okay, this is actually pretty good. I'm not quite used to your permissions model, but I think being willing to check in on the new trends, especially the Claude Code, the Codex from the Frontier Labs quite regularly, especially when new models come out, is the key thing that I think gets you into it more and then giving it a bit of trust.

The other thing I'd say is do some greenfield side project work of your own. I think it's quite scary letting an agent work on a giant corporate code base. Just build a blog with it and see how far you can get. I think it takes a little bit of time to understand how to prompt it and how to get the most out of it. I think that's my key point is just keep on checking in with what's new because the benchmarks don't tell the full story. I think I get a real post about this, but...

Dan (53:32)

production code base. Yeah.

Martin Alderson (53:51)

A lot of the benchmarks look like very small changes. Like the leap from Sonnet 4.5 or Opus 4.1 to Opus 4.5 was like big, but like not huge when you look at it. I think a lot of us tech people are used to looking at like, you know, CPU benchmarks or GPU benchmarks where you want to see a hundred percent increase, not a, yeah, not a 50 Elo point score improvement, but,

Dan (54:09)

20 % improvement. Yeah.

Martin Alderson (54:15)

There's a lot of stuff that goes on behind the background, which doesn't get captured in these simple benchmark numbers. So I think, just keep checking in with them all.

Shimin (54:21)

Great, yeah.

Thank you Martin for joining us, and once again you can find Martin's work at martinalderson.com and subscribe to his newsletter, all the rest of us do. So be like one of the cool guys.

Dan (54:33)

You

Martin Alderson (54:33)

Great.

Exactly. Thanks so much, guys. I really appreciate your time.

Dan (54:37)

That works. Thank you.

Shimin (54:38)

Thank you. This was super fun.

Shimin (54:39)

And we're back! Guys, that was a great interview!

Dan (54:43)

It was.

Rahul Yadav (54:44)

What did you guys do to me in that interview? You cut me out?

Dan (54:48)

So do you see this door behind me? For those of you listening, there's a partially opened, very mysterious closet door, right behind me. And we may or may not have kidnapped Rahul and tied him up in there.

Shimin (54:49)

Mmm.

Rahul Yadav (54:51)

Yeah, I was in there.

You

Shimin (55:03)

All right. I think I'm

So why don't we get to two minutes to midnight, our favorite segment where we talk about where we are on the AI bubble watch, similar to the nuclear clock from the Bulletin of the Atomic Scientists. First up, Rahul, you have an article from Citadel Securities,

a large hedge fund. Funny story, a recruiter associated with Citadel actually hit me up last week for a quant position with their REC team. Yeah, not qualified. Also have to commute to Boston. That seems like it'd be hard.

Rahul Yadav (55:22)

Pretty big.

Dan (55:30)

Interesting.

Maybe they're just impressed by your.

Rahul Yadav (55:36)

It'll be a bit of a long commute

from where you are.

Shimin (55:39)

Yeah.

Dan (55:39)

They were just

impressed by your analysis on two minutes. know, future quants

Shimin (55:43)

I hope so.

Rahul Yadav (55:45)

That'd be hilarious if they're like, Shimin, we really like your takes on two minutes. You know what's going on.

Dan (55:47)

That would be qual, I think, not quant.

Remember, friends, this is not investment advice.

Rahul Yadav (55:54)

Hehehehehe

Dan (55:56)

It's just us

poking holes at the AI bubble the only way we can, which is through the lens of the atomic clock pioneered in the 1950s by the Bureau of Atomic Scientists who were trying to measure how close we were to Armageddon. But in this case, we're measuring how close we may or may not be to the AI bubble bursting. I had to put that in there.

Rahul Yadav (55:59)

Yeah.

Shimin (56:16)

Although I think

we also got much closer to Atomic Armageddon this past weekend as well, so...

Dan (56:21)

Why not both?

geez. All right, Rahul, kick us off.

Rahul Yadav (56:24)

Yeah,

this article, you know, everybody's trying to get their take on where the world would go, is by one of the most awesome names, honestly, that I've read recently: Frank Flight at Citadel.

And some of the key things that they talk about is like the AI technology itself has the recursive self-improvement curve, right? We're already seeing that the new models and all that is speeding up because they're using the models to build the next generation of models and everything. But we shouldn't take that, we shouldn't apply that thinking to how its adoption would go.

Iteration can be recursive, but the adoption won't be exponential. They say an S-curve is the best you can get. So like just the different things that we have, working in the real world:

physical restrictions, there's regulatory stuff you have to get through, organizational constraints, all of those things are real and regardless of how fast you can improve the model, its adoption cannot match that speed. So we should keep the two separate. Other things that they call out are, as we build better models and everything,

the cost of these is going to go up. So at some point, human labor might be cheaper in some cases than the cost of that model. And in those cases, it might not make sense to actually use the model. And then some of the obvious ones that we've talked about in the past, you know, like, when you get productivity gains, you actually don't see job loss, you actually see even more

consumption and everything, which leads to more jobs and all that. John Maynard Keynes said that we would have 15-hour work weeks by now, and here we all are. 996 is the new hot thing, so Keynes got that one wrong.

Shimin (58:13)

Yeah. He said

15 hours work weeks and we heard 50 and you know, it just happens.

Rahul Yadav (58:17)

Yeah

Dan (58:18)

Maybe he meant 15 hour work weeks per day.

Rahul Yadav (58:21)

Now, what is 996? It's 72 hours, so you're looking at almost two weeks' worth of work in one week. And then the final couple of sentences that they close this whole article on really stood out to me.

Shimin (58:23)

You

Rahul Yadav (58:37)

Our population is aging, climate change is a real threat, deglobalization is happening. So all of these things put downward pressure on potential growth. And so they're saying all the promises we're assuming we get from AI might just be enough to offset these downward trends. So there is a world where it just nets out that, you know,

Shimin (58:48)

Mm-hmm.

Rahul Yadav (59:04)

AI keeps us going on the current trajectory, and nothing much changes because there's so much other downward pressure from different things.

Shimin (59:11)

Yeah, that's an interesting take that I've not considered before.

Dan (59:13)

Maybe AI will recommend that we fix climate change.

Shimin (59:15)

Let's ask Claude that after the show.

Dan (59:17)

Claude,

should we fix climate change? I don't know.

Shimin (59:20)

okay. Then, your brother...

Rahul Yadav (59:21)

I wanna see what Grok

says.

Dan (59:24)

Yeah,

that would be an interesting comparison.

Rahul Yadav (59:27)

It's going to work in our Department of War now, so I think we should start asking it some serious questions.

Dan (59:34)

Yeah. So the little piece that I'm bringing up here is a Substack post that, I don't know if you all have read it or not, but it was pretty interesting. And so this article in the Guardian that I submitted actually just kind of summarizes the Substack post, but it shook the entire

stock market when that post came out. So that's kind of interesting. But the thing that was fascinating about that post is the sort of TL;DR version of it: they're basically saying, what if AI works too well and there's a white-collar job crisis, and that winds up essentially creating, I mean, we've talked about the term ghost economy

Shimin (1:00:19)

Mm-hmm.

Dan (1:00:25)

before on here, but it winds up only attributing value to the ghost economy while the actual economy tanks. And that freaked people out a little bit. And the entire S&P dropped 1%, which they're attributing to

that post specifically because some of the companies that he mentioned in the sort of theoretical future space were explicitly the ones to drop.

Shimin (1:00:49)

Yeah, it's interesting that, like, American Express dropped because white-collar workers will no longer be able to buy anything. That's the kind of second-order effect that I did not expect. Yeah. From a Substack post.

Dan (1:00:56)

Ha ha ha.

Yeah, and then the other one that was pretty good was the

that I didn't expect.

Rahul Yadav (1:01:09)

Well,

while Dan pulls it out, I want to plug a book. There's an older book called When Experts Speak, and one of the things they talk about is experts who go on, you know, the news and everything to give their commentary. One of the classic examples you see is experts on the stock market.

One day you would see "stock market tanks because XYZ happened." And then if it doesn't tank or go down the next day, you can literally take the same thing and go "stock market's holding despite XYZ happening." And they're like, either way, it's just a continuous news mill cycle. So you should kind of look more into these things. So, yeah.

Shimin (1:01:49)

I see that so frequently

on CNBC. It is like almost comical. I just use it as a ticker at this point because the banners don't mean anything.

Rahul Yadav (1:01:55)

Yeah.

Yeah, so if these stocks hadn't tanked, you could have still come up with the headline, stock market doesn't respond to this article at all. Good then.

Shimin (1:02:07)

Yeah, exactly. Or the stock

market tanked due to Fed rate pressure, or for a million different reasons, right? Like gas has gone up, oil has gone up.

Rahul Yadav (1:02:13)

Yeah

Yeah.

Dan (1:02:20)

Maybe I'm hallucinating this myself as a human and it didn't actually wind up in here. I was just furiously skimming while you were covering for me. And I guess they didn't mention it, but the thing that I've been at least thinking about in the same context is that the way that people will pay for things could drastically change in the near future, right? Because you've got concepts such as brand loyalty that we have today.

Shimin (1:02:28)

You

Dan (1:02:46)

Right. Where you might always go to brand X, either because that's sort of your personal ritual or, you know, you just appreciate the way they do business or whatever. All that stuff goes out the window when you're basically like, "Claude, buy me a sandwich." You know, Claude will find the most efficient sandwich to buy you and then it will arrive at your doorstep for the cheapest possible price. And that's going to have a

Shimin (1:02:47)

Mm-hmm.

Mm-hmm.

Dan (1:03:12)

pretty drastic transformative effect on the economy too, because everything about how a whole bunch of things work is gonna go straight out the window. Which I thought they'd covered in this, but I guess not. Whatever, I guess people can hallucinate too.

Shimin (1:03:13)

Mm.

Yeah, maybe, you know, when we all lose our jobs, we can get an unemployment discount for Claude. "Claude, remember I was asking about unemployment insurance not long ago? My sandwich should be cheaper."

Dan (1:03:29)

Claudia.

Claude,

I always said thank you at the end of my prompts.

Shimin (1:03:38)

Give me a nice guy discount. Have you guys tried that? Nice guy discount. It's a-

Dan (1:03:40)

Yeah.

Rahul Yadav (1:03:42)

Is that a thing? Why doesn't I-

No one offers me that ever. I don't know.

Shimin (1:03:47)

But the concept

is like, you ask for it. You're like, hey, by the way, can I get a nice guy discount on this thing? I've never tried it, but it's something I've always wanted to try.

Dan (1:03:56)

Seems like

something that should be granted

Rahul Yadav (1:03:57)

You probably won't get it so...

Shimin (1:03:59)

my two minutes,

Yeah. Block shares went up 24% as the company slashed almost 40, 45% of the workforce last week. Yeah. Now, according to Jack Dorsey, the layoffs are because of AI and AI-related productivity gains. I'm not saying I believe it, but what this reminds

Dan (1:04:06)

Ugh.

That one was just painful to read.

AI washing is definitely

a thing.

Shimin (1:04:26)

Right. But

me of is when Elon did a massive run of layoffs at Twitter, or X, the artist formerly known as Twitter, what, two, three years ago now. And the market rewarded Block for this, which probably will, you know, as a second-order effect, lead to other folks believing that AI is effective at replacing workers,

which would probably be an indicator that folks think AI works and therefore lead to more AI investment.

I'm not saying it doesn't work. I'm saying regardless of whether or not it works, folks are going to take it to be that AI is a massive productivity gain, and therefore the market will see it that way. Yeah.

Dan (1:04:55)

Are you saying it doesn't work? We're sitting here talking about it every day.

Right, like the, yeah, in the same way that Elon made it okay

to do deep cuts in tech. So everybody did deep cuts in tech, yeah.

Shimin (1:05:16)

Yep. Yep. It's a permission structure.

Rahul Yadav (1:05:20)

My favorite part of this, going back to the LinkedIn shirt, is the third paragraph: "B2B SaaS is less than half a percent of US GDP. It's simply not an important industry relative to anything macroeconomic." I felt hurt about that. I was just like, all of us, it was just like.

Dan (1:05:38)

Got me right in the career.

Rahul Yadav (1:05:40)

Yeah,

exactly. All you B2B SaaS guys, no one cares. Eli is a great, he has great taste, smart guy. I like his takes on.

Shimin (1:05:55)

I thought you were gonna say Eli is a great hater, which is probably also true.

Dan (1:06:02)

So.

Rahul Yadav (1:06:02)

As far as I can tell, he thinks things through.

Dan (1:06:06)

So speaking of thinking things through, where does all this all over the map dart throwing of news get us in terms of two minutes this week?

Rahul Yadav (1:06:06)

we call it?

Shimin (1:06:15)

Great segue.

We were at two minutes and 15 seconds.

Anyone got a number they want to throw out there?

Dan (1:06:20)

Well, here's a hot take. I want to move it further back, but not because of any of the articles that we pulled for this. Purely because of the Pentagon OpenAI contract. Because I think that if OpenAI is able to do a little bit better with that money, then... was that an existing contract or no? I don't think so, right? Yeah.

Rahul Yadav (1:06:41)

New. Anthropic,

Shimin (1:06:41)

new contract.

Rahul Yadav (1:06:43)

it seemed like, was the only one in the government through Palantir. So this would be new.

Dan (1:06:45)

Yeah, then that may prop

them up a little bit. In my mind, for better or for worse, they're still the pin that pops the bubble, at least as far as frontier companies.

Rahul Yadav (1:06:55)

No.

Shimin (1:06:57)

That's fair. My thought was we don't know if the government is actually designating Anthropic as a supply chain risk. Yeah.

Dan (1:07:04)

Supply chain risk. They're still using it. I heard that the

Iran strikes were planned using Anthropic, though.

Rahul Yadav (1:07:08)

Yeah, you can't

Shimin (1:07:09)

Right.

Rahul Yadav (1:07:10)

rip it out. It's thoroughly embedded in Palantir systems, so

Shimin (1:07:14)

Yeah.

Dan (1:07:14)

Yeah.

Shimin (1:07:15)

If they did, I would move us much, much closer, because Anthropic is also very much tangled with AWS and, I think, I want to say Google. So it.

Rahul Yadav (1:07:22)

Yeah.

Yeah and folks this

is why you should use OpenRouter or Google Vertex, so that you can easily switch your models in case one is not doing what you want it to do.

Dan (1:07:34)

You

Shimin (1:07:38)

But

I agree with you, I think we can move it back. No news is good news, although a lot of news did happen.

Dan (1:07:48)

Yeah. And I guess, arguably, you could look at the Block news as, well, maybe now we are seeing economic impact, so AI is working, and if it is working, then less bubble risk. I don't know. Again, this is not financial advice. I'm just shooting from the hip that you can't see on my webcam or here on the podcast.

Shimin (1:07:59)

All right. Yep.

Rahul Yadav (1:08:04)

You're moving to...

You said three minutes?

Dan (1:08:09)

We don't have to go back three, but like back a little bit.

Rahul Yadav (1:08:12)

Okay. Three random podcast guys on one tiny corner of the internet feel more optimistic about the future despite dooming reports and Block layoffs. Yeah. Stocks do not care.

Shimin (1:08:12)

All right.

Yeah, we do.

Dan (1:08:22)

Despite all of the signals to the contrary every day.

Shimin (1:08:24)

Yeah, despite AWS going down due to a missile strike, yeah, we feel better about AI at least. Okay, let's

do...

Dan (1:08:32)

It is gonna

take our jobs. We did it, guys.

Shimin (1:08:35)

Let's do two minutes and 45 seconds. We're feeling better about the prospects of AI taking our jobs. OK, well, let's go with two minutes and 45 seconds then. And with that, that's the show. Thank you for joining us for another conversation this week. If you like the show, if you learned something new, please share the show with a friend. You can also leave us a review on Apple Podcasts or Spotify. It helps people to discover the show, and we really appreciate it.

Rahul Yadav (1:08:37)

Yeah

Shimin (1:09:00)