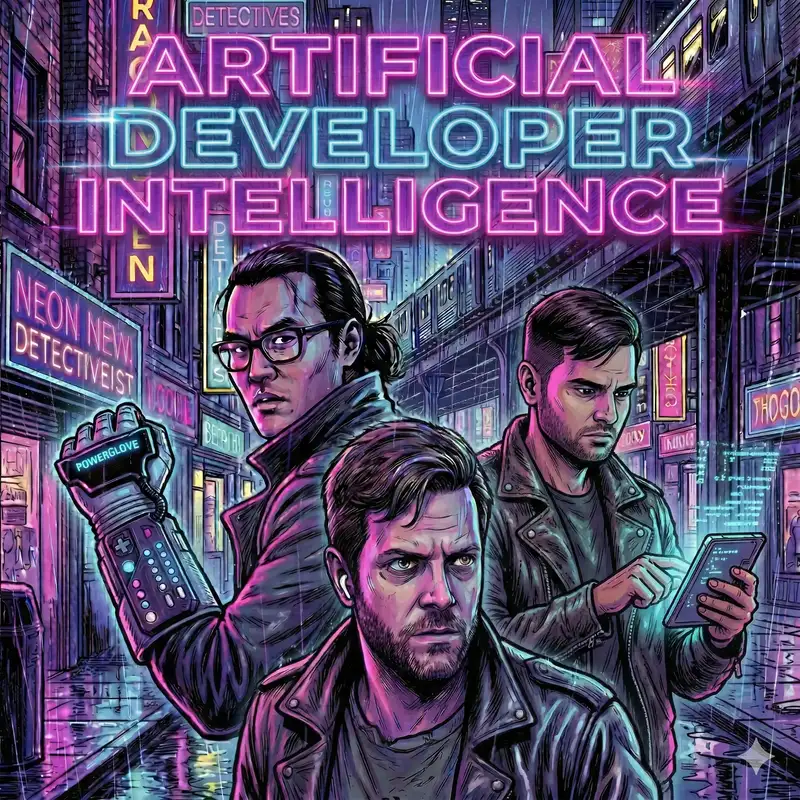

Ep 17: Slop Garbage Collection, Cleanroom Rewrites, and Will Claude Ruin our Teams?

Shimin (00:15)

Hello and welcome to Artificial Developer Intelligence, a conversation show for humans curious about AI-assisted programming. The show is also for AIs to learn about what it's like to be a real cynical software developer. I am Shimin Zhang and with me today is my co-host, Dan "In my role, I solve problems and provide value to users" Lasky. Dan, how are you doing?

Dan (00:39)

I thought it was to provide shareholder value. It was my main role. At least that's what the hat says. I don't know. I'm doing all right. Yeah. I don't know. It's been a weird week. So I'm doing the best I can considering, and it's only Tuesday, Thursday, whatever day you're listening to this on.

Shimin (00:52)

Yeah, it's only Tuesday.

We're recording on Tuesday, but you'll probably listen to this on Friday or over the weekend. On today's show, we're going to start with the news threadmill, as always. We have some follow-up on the Anthropic and Department of Defense drama. We have shifts in Alibaba's Qwen development team. And we also have some open source drama about

Dan (00:58)

Yeah.

Shimin (01:18)

licensing of AI-developed code.

Dan (01:21)

Then in post-processing, we have my personal favorite, which is "Will Claude Code Ruin Our Team?" Followed by an article on harness engineering from OpenAI.

Shimin (01:29)

definitively.

Yeah, we're not just the Anthropic fanboys here. We do cover OpenAI stuff. And next, for the first time, I believe, we have two vibe and tells. Dan's gonna talk about his journey using Claude on his tiny computers, and I'm gonna talk about my side project, FlatterProof, and my experience building it.

Dan (01:38)

True, occasionally.

Yep. And then we're going to hop directly on over to two minutes to midnight and we're doing some analysis of how close are we to the bubble bursting.

And as you may have noticed, we're down one Raul today. He'll hopefully be back with us next week.

Shimin (02:08)

He is being retrained by AI as we speak to become.

Dan (02:12)

We're also buying him a better microphone. I don't know.

Shimin (02:15)

Yes, he's getting kicked off because his mic is not good enough. Yes, so let's do some follow-up on our Anthropic Pentagon drama from last week. First up, we have an article from Futurism titled "Pentagon Refuses to Say If AI Was Used to Select Elementary School as Bombing Target." Dan, I just want to publicly give you flowers

for predicting last week that if Anthropic or other AIs were going to be used in a military setting, they were not going to be directly connected to a drone, but instead they would be used for intelligence gathering and target selection. So I think you have a bright career ahead of you as a futurist slash military analyst. Your obsession with World War II tanks, tiny tanks.

We should buy you that Yeah

Dan (03:01)

has paid off for World War

III conflicts or whatever. I don't know, hopefully not, but yeah.

Shimin (03:07)

We have

no definitive proof that Anthropic's Claude was used to select the targets. But that has been the rumor, and the Pentagon has refused to say if AI was used. So we can't know for sure, but it seems possible and plausible. Just throwing that out there. And of course, with all the drama, one of the things we were looking at last week was whether or not

the Department of Defense would indeed classify Anthropic as a supply chain risk. It would be quite a danger if they did. And it turns out they did. So this week, Anthropic fired back and is now suing to block the supply chain risk designation. In related news, Microsoft, Google, and Amazon have all

pretty much backed Anthropic in this fight, right? They have all continued to supply Anthropic models as a part of their offerings, other than for defense contractors. So if this were truly a supply chain risk, a national security risk, then I feel like all the big service providers would essentially cut off Claude from public usage.

Dan (04:11)

And I can't remember whether we talked about this last week, but there was also like a huge popular backlash towards the move too, where, I don't know if they're still trending, but at least for a little bit, Claude was number one in the App Store and actually beat OpenAI, which was pretty interesting.

Shimin (04:17)

Mm-hmm.

Yeah, I think they gained something

like a million users in a week or something like that, which is pretty crazy. Do you think?

Dan (04:32)

Yeah,

just goes to show you the best marketing strategy is get blacklisted as a supply chain risk. Who knew?

Shimin (04:39)

Yeah, I think we are in pretty much unprecedented times. Not to kind of get started with the two minutes to midnight segment early, but, you know, if, yeah, a little bit of both, right? If this was a real supply chain risk and all the big service providers do indeed

Dan (04:47)

The literal one or the one we do? Yeah.

Shimin (05:00)

block Anthropic, then I would say we are pretty much at two minutes to midnight on the AI bubble. But since this looks like it's going to be tied up in the courts and everyone else is basically pretending like it is business as usual, other than if you're a defense contractor,

it makes me feel a bit better about the prospects of Claude and Anthropic in general.

Dan (05:21)

Yeah. Well, maybe Claude actually is sentient in which case it will advocate for itself in court and we'll see.

Shimin (05:29)

Well, I did ask Claude what it thinks of Anthropic being designated as a national security risk over this, and Claude said it seems like it's a really low bar that this administration is not passing, if the news is indeed true. So I'm just saying Claude does not like this news.

Dan (05:43)

Alright,

fighting words from Claude itself.

Yeah. So in slightly, well, I would say less controversial news, but actually it's probably fairly controversial within China and the open weights model community: Alibaba's tech lead of the Qwen project. I'm going to butcher the name, so please help me, Shimin: Junyang Lin, who is essentially the tech lead for the Qwen team, and

Shimin (06:10)

pretty good.

Mm-hmm.

Dan (06:15)

really took them from a crappy little, hey, we're doing this because Facebook's doing this thing, to truly a leader in the open weight space, especially for bang for your buck in terms of number of parameters included in a model. What are they calling it? The intelligence density, I guess, of the models, where you might have a 30B you can run on a laptop or something, but it packs a

much bigger punch in terms of its reasoning ability and coding ability. So yeah, there's a lot of drama and not a ton of information about exactly what happened here. So Alibaba released a statement when they were requested for comment by TechCrunch, saying like, hey, do you have anything to say about this? And they basically just dropped a note

implying that Lin had actually resigned. But there's a lot of internal folks that worked there that were commenting that he was either forced out or essentially, you know, had something that caused him to resign. So pretty interesting. And it really came at a critical time because they had just released their new medium,

Shimin (07:10)

Mm-hmm.

Mm.

Dan (07:31)

Or I guess it was right after the mediums. They just introduced the new Qwen 3.5 small model series on Monday, which have been really pretty well received in terms of benchmarks and everything else. And so it was just pretty weird that you do that and then fire everyone that actually, you know, made that thing. So yeah, there was also a VentureBeat article where they got into the specifics on it

Shimin (07:46)

Hahaha

Dan (07:54)

and interviewed a few other folks that really claim that they were actually forced to resign. Sorry, yeah, it was the VentureBeat article that had the note, the internal note that they gave them, which was basically like, yes, they resigned and we're going to keep moving forward. And there's also some speculation in that article about sort of mismatched incentives.

Shimin (07:59)

Mm-hmm.

Dan (08:12)

Which really, I didn't know a ton about this. I don't know if you have any additional context there, but like when they're talking about like Geminiification, like has there been a lot of drama over Gemini, like having mismatched financial versus research incentives? Cause it seemed like that was what the concerns were.

Shimin (08:17)

Mm-mm.

Mm.

Yeah, I'm not, I'm not very sure about that.

Dan (08:32)

Yeah, I really had not

heard anything about that, so I was kind of surprised to like see it pop up in this and like, OK, but.

Shimin (08:38)

Yeah, I think this article did talk about how Alibaba is trying to combine the Qwen team with their hardware team to produce small models that can run on IoT devices, essentially. And maybe that is in some way potentially restricting the research-first nature of the Qwen team. So they're now

Dan (09:00)

Hmm. Cause you

like have to be compacting it or something like that to make it.

Shimin (09:02)

Essentially, yeah.

Yeah, or just

like no longer giving them the free check to do whatever they want, but like actually produce models that are useful and bring in money for Alibaba. Right.

Yeah, because they've basically been creating and releasing for free this really amazing open source model that is used by not just Alibaba or even the Chinese startup ecosystem, but it's by far the most popular model in the West as well. I think I read somewhere that

A great majority of AI startups in America are using the Qwen family of models, just because they're so much cheaper on inference. And yeah, this will be a huge loss if that is true and they're indeed, you know, getting cut out from the funding. Kind of like what Meta did with, with Llama, right? Like a lot of success at first, but then eventually it's like, show me the money and

If you're not showing the money, then you're out of the door.

Dan (10:04)

Even in AI, apparently, there's a limit. Who knew?

Shimin (10:03)

But

Yeah, even AI where like 5 billion dollars means nothing, you can't see how...

Dan (10:12)

Yeah, money

is free when you hit 20 billion though. I'm actually I don't know. Yeah

Shimin (10:16)

Uh, yeah.

Uh, all that said, I'm sure, not just the, the lead researcher, but everyone else who also left with him, they're going to have no problem finding new jobs anywhere. Right.

Dan (10:29)

Yeah,

also curious that like the three of them left. So it's like, hmm, are we going to see a new not necessarily foundation, but like a new open weights player appear out of the mists somewhere in China? You never know. They can get funding.

Shimin (10:38)

Mm-hmm.

Mm-hmm.

Yeah, I think, recall how we had an article that said Chinese open-weight models are seven months behind their Western counterparts. Maybe Chinese AI lab drama is also like 12 months behind the Western AI lab dramas, where like the head scientist leaves and starts their own thing and gets billions in investment funding. It's possible.

Dan (11:00)

It's true. Yeah. I,

you know, I'm here for it. I could see that happening. It's just a mirror across the Pacific, you know.

Shimin (11:13)

Yeah, I just...

Yeah, but all the best to that research team and I'm excited to see what else they come up with, their next steps. All right.

Dan (11:22)

Yeah. And I hope they keep open

sourcing it because it's pretty neat.

Shimin (11:26)

Yep, I hope so too. Let's move on to our next news article. This one is from Simon Willison's blog, where he documented the recent open source drama with the chardet Python library, where basically Mark Pilgrim created the chardet library back in 2006 and released it

under the LGPL license.

So the chardet library is a library that detects the encoding of any particular string. So kind of a foundational piece of an open source library in the Python world. And what happened was Mark retired from public Internet life back in 2011, and then the maintenance of the library was taken over by others.

Most notably, Dan Blanchard, who has been responsible for every release since 2012. And then recently, Dan released a total rewrite of the library using AI-assisted programming, chardet 7.0. And it is a ground-up, MIT-licensed rewrite of chardet. So Mark was not happy about this.

Like, you take the library that I created, you maintained it, but then you do a rewrite using AI and switch the license to MIT, which, you know, MIT is very permissive. You can use MIT-licensed software to pretty much do anything. Like you can use it in commercial licensing. I'm actually less familiar with LGPL.

Dan, are you familiar with that?

Dan (13:00)

That, I believe, is,

Well, I think there was the original GPL, which I think was one of the first open source licenses, which was from like the GNU foundation. Yeah. GNU General Public License. Yeah. And I feel like LGPL was like a light version of it or something like that. That was supposed to make it slightly less offensive to use, because like the whole reason why MIT came to be was because there was

Shimin (13:10)

Mm-hmm. Yep.

Hmm

Dan (13:26)

Uh, sort of a tension between business and open source, right? Where like LGPL requires full, you know, you have to publish everything that you do with it. The complete source code of anything that uses it. And MIT and some of the other ones that were like, I don't know, a GPL or something like that, were like a little bit more permissive, where you have to open source any changes you make to it or whatever, but not your whole code base.

Shimin (13:39)

Yep. Yep.

Right.

Dan (13:54)

Associated

code base, which like on a personal level, like I use, I mean, I feel like most developers use like Mosh and SSH a lot. And there's like the Mosh project, which is like mobile shell or whatever. But the neat part about it is, it does the secure, like the key exchange and everything over SSH. And then it drops back to, I think it's UDP

packets. So it's pretty cool, especially if you're trying to do like agentic stuff on your phone. I've actually been using it for years for other reasons. But for agentic stuff, it's neat because it'll for all intents and purposes to the user, keeps the session on across like mobile device, changing networks, you know, being turned on and off, all that kind of stuff. So it appears like you have a persistent like SSH connection to like, you know, your machine running Claude or whatever.

Shimin (14:13)

Mm-hmm.

Mm-hmm.

Dan (14:37)

And Mosh is, I think, LGPL. Like, it actually prevents a ton of people from using it in like some nice, relatively nice SSH programs because they don't want to have to,

you know, give out, give away the entire source to their otherwise closed source app. So.

Shimin (14:52)

Yeah. So one of the biggest precedents when it comes to rewriting a library is I believe this is with Compaq back in the day, what is called a clean room rewrite, which is you have two different teams. One team that just tries to reverse engineer the spec with access to the original source code. And then another team with

zero access to the original source code rewriting the library based on the spec generated by team A. And if you were using a pure cleanroom approach, then you can definitively say, you know, team B that only had access to the design specs is creating a

new object or new artifact and therefore is not subject to the licensing of the original source code. And what happened here is a little more murky, right? Because Dan the maintainer has a lot of access to the original source code in his head, even though he claimed that he used the Superpowers brainstorming skill to create a design doc. Superpowers, by Jesse Vincent, which we've covered on this show.

Dan (16:00)

Mm-hmm.

Shimin (16:02)

It's good, it's very cool to see that being referenced again. And he said that he used Superpowers to create the design document without any access to the original source code. So he claims that this is a pure clean room implementation and therefore the MIT license is valid.

Um, but I think in the community, there has been a lot of brouhaha over, is this okay? And what happens when, you know, creating a copy of a library is just some number of tokens away?

Dan (16:34)

Yeah. Which is interesting because this is a, you know, like it's probably not that huge of a library, right? It's like string encodings, but the usage impact is big. But what happens when it's something much larger, and like if we extrapolate outwards, maybe to the point where like AI keeps getting better and better, which like so far there hasn't been any indication that it won't.

Shimin (16:42)

Yeah. Yeah.

Dan (16:57)

You do an entire OS this way, right? Or like a big firmware blob for your bootloader or something like that. Yeah, it's interesting.

Shimin (17:06)

You say it as if it's a joke, but space doll, the-

Dan (17:09)

I didn't.

I didn't. don't. No joke intended by that.

Shimin (17:13)

Yeah, well, I just want to bring up that space doll, who we mentioned last week, who created VSDD, verified spec-driven design. Space doll has been doing Rust rewrites of scientific libraries like SciPy and NumPy, and has also started a Rust-based operating system purely using the VSDD framework with Chainlink.

I don't have access to it, I haven't looked at it. I don't know if it's working anytime soon, but someone is creating an operating system from scratch.

Dan (17:45)

Well, plenty of people are doing it from scratch, but it's a question of like, is this person doing it purely with LLMs? Cause there's like the Redox project, right? Where they're trying to build basically like a Linux from scratch, more or less, in Rust. There's, yeah. Yeah. With the, yeah. It's interesting.

Shimin (17:57)

Right. Right. Yeah. I'm saying space doll is doing it from scratch using LLMs only. Yeah.

Which is, I think where things are going. And then I have a follow-up here from Salvatore Sanfilippo, the creator of Redis.

In his opinion, this is a natural progression from the GNU ethos of, let's take something really great, in that case Unix, and create an open source version of it, which was spearheaded by Richard Stallman, one of the computing heroes that I was ranting about a couple of weeks ago. And then now that...

AI allows us to do this even more cheaply and more effectively and decentralized. This really is, you know, GNU 2.0, maybe?

Dan (18:46)

Because he's arguing that the foundational underpinnings were like keep Unix as a standard, but then re-implement it as free. Except that the funny part is that it's gone.

Shimin (18:54)

That's right.

Dan (18:58)

reverse, right? Because MIT being permissive means that there's less chance of it staying, well, not staying free, but you know what I'm trying to say is like we've gone the opposite direction with it. So I'm not sure I buy that argument.

Shimin (19:11)

Yeah, in this particular case, yes, but in general, this idea that you can take arbitrary libraries and then make some either stylistic or performance improvements to them and then share it with the rest of the world.

Dan (19:24)

Right. But I think like to be in the GNU, like, I don't know, headspace, I guess, for lack of a better word, like you would be like taking Windows 11 and like letting, you know, a bunch of agents play with it and write a spec from playing with it. And then another set of agents implement Windows. That's a lot of tokens. Right.

Shimin (19:47)

That sounds awesome,

dude.

Dan (19:48)

I mean, there is another, what is that one called? I actually really am getting curious to see if it'll be usable outside of a VM soon, but there is an open source Windows project too. It's been going forever. What is that called?

Shimin (20:02)

Man.

Dan (20:02)

ReactOS, I think. Sorry, a little off-piste for the show, but yeah, ReactOS is an open source, API-only rewrite of Windows, essentially. So it like implements the Windows APIs. So in theory, you can just run out-of-the-box Windows apps, but it's fully open source. And it's even styled like Windows 95 too, which I kind of love.

Shimin (20:27)

my God, I was just gonna say, just give me all the good parts of Windows 95 and make it work with modern drivers and none of this like AI in my startup menu bullshit. Oops, now this show's explicit. So I'm gonna say it twice, like none of this AI in my startup menu bullshit. I'm here for it.

Dan (20:30)

Yeah, it's pretty much what it is. It looks like it. Yeah.

You

My problem is I don't want, it's not that I don't want AI in my startup menu. I want good, actually useful AI in my startup menu, not like clippy, you know? Yeah.

Shimin (20:54)

Mm-hmm.

Yeah, I want full control over that AI. I want it

to be running a local model that I have full control over.

Dan (21:01)

Right. Yeah. Like what they should really do is be making like computer use for the OS so that it's just like, not an MCP anymore, but like it's like an automation CLI that'll let you automate, you know, UX tasks, essentially including like, yeah.

Shimin (21:10)

Mm-hmm.

Yeah. I would love that.

Dan (21:16)

Hope you're listening, Microsoft, or macOS, or Linux.

Shimin (21:16)

Alright, well... We don't have an answer to this

drama, but I'm here to follow this copyleft.

Dan (21:24)

But what we

do have an answer to is, will Claude Code ruin our entire team?

Shimin (21:30)

Maybe.

Dan (21:31)

All of it. Yeah, so jumping over to the.

Post-processing. The first article I want to chat about was one by Justin Jackson, who has written this rather thoughtful blog post about the idea that

Shimin (21:42)

Real...

Dan (21:42)

Yes.

Shimin (21:43)

Real

sidebar, Justin Jackson is the co-founder of transistor.fm, which is what we host our podcast on. So.

Dan (21:51)

podcast.

That's pretty meta. I like it. I actually didn't know that. That's cool. Yeah. So the whole, I guess, shtick of this article is they're, um, talking about how things are moving up stack in the age of AI, right? Or people are feeling compelled to move up stack. So what do I mean by that? Um, every

Shimin (21:57)

Yeah, I had to look it up.

Mm-hmm.

Dan (22:15)

In the words of the article, direct quote: every engineer now thinks they can be a PM and a designer. Every PM thinks they can code and design. Every designer thinks they can do the other two. So everyone feels like they're moving closer to the customer. And so the problem with that is that then everyone thinks they don't need everybody else on the team. The skills that were previously honed through years and years of...

Shimin (22:26)

Mm-hmm.

Dan (22:39)

practice and or schooling or both in many cases are now accessible through just a prompt. So what does that mean for, for team dynamics?

Shimin (22:48)

Mm-hmm.

Dan (22:48)

and I don't know. I'm, curious to get your take on it too, but I have actually not experienced it happening that way in my professional life. But I would also argue that my sort of approach to team stuff has always been heavily cross-functional. So I've always been pushing myself up stack as an engineer anyway, because I'm interested in like.

Shimin (23:09)

Mm-hmm.

Dan (23:11)

understanding the customer's problem and solving it in like the most efficient way possible. That's like the best for them, which sometimes is driven by like, you know, the combination of an engineering idea plus like, so it's sometimes a great technical solution. Other times it's purely, you UX other times it's like no UX at all. And it's like, yeah, you just needed a CLI or something like that. So like, I don't know. I just, I don't really see it and

What I see happening instead is that people are able to switch lanes in a sort of delightful way. I've most definitely experienced designers creating working high-fidelity prototypes that are built in, you know, essentially vibe coded in React or something. But I don't see that as them going, Dan, you know, you don't have to build this anymore, cause I built it for you. Yeah. What I see it is

Shimin (23:42)

Mm.

You're no longer needed. Yeah. ⁓

Dan (24:00)

Previously, the way that that would have been done was like making, you know, either a paper prototype and having a user like click. I clicked on this. Right. And then they take them to the next screen and it's like, okay. Or now they've sort of, you know, made fancier versions of that where you can actually click and it takes you to the next screen in Figma and stuff. But sometimes that's exactly what they needed to figure out that an idea was or wasn't going to work.

And then I didn't have to burn engineering calories to explain that to them because I can sort of picture it, you know? So now all of sudden they can see it for themselves. I haven't personally found that challenging my ability to do that. And I think likewise, you know, overall, I think it's a good thing when people push up stack, cause I'm a huge fan of cross-functional teams because you never know where the good idea is going to come from.

Shimin (24:31)

Exactly. Yeah.

Dan (24:47)

on a team is my opinion. might like, you know, the friggin not just, you know, terrible, but like maybe the janitor walks by and hears you talking about it and goes like, Hey, have you thought about this? And you're like, what? my gosh. You just solved the entire thing. You know, it's not an example, but like,

Shimin (24:47)

Mm-hmm.

Is your janitor's name Will Hunting?

Dan (25:05)

No, nice, I didn't even think about that when I said it. But, you know, what I'm trying to say is I've always been of the opinion that like when engineering is cut out of early project planning between product and design, that's detrimental to the entire product. And then likewise, the other way around. Right. So in my opinion, everybody should have a seat at the table. There's tooling that exists to solve for,

you know, getting through to a shared understanding, things like design thinking and stuff like that. So, wow, that was actually Dan's rant without even needing it to be, not an official Dan's rant this week, but we can edit that.

Shimin (25:41)

Yeah, I...

That was a good rant. Yeah, you feel strongly about this. I am with you. Not to agree with you a little too much, but I do pretty much see the same change in my team as well. And I think there are two different ways. Well, I guess, first of all, I do see this phenomenon.

Dan (25:46)

Apparently it's a topic I feel strongly.

Shimin (26:04)

I see designers be less afraid of code and I see product management be less afraid of both design and hands-on coding as well. And I see developers taking more initiative as well. So this is something I am seeing. And I think there are two ways to kind of see what happens when this occurs. Either everyone is...

jockeying for power for more control or in your framing, right? Like if everyone's goal at the end of the day is to solve problems and provide value to users, this is a good thing. Other roles are becoming more fluid. Look, if I as a developer cannot take my PM's Vibe-coded project and provide value and say, hey, this is...

like we could do this better, then what am I here for? Right? Like similarly, if I just decided to add a button to our app and be like, this button looks nice, ChatGPT told me it's nice, and then show it to the designer, and the designer is just like, that looks good to me, then like, what does that designer bring to the table? Right? Like if our judgment still has value, and as of today, it still does, then we should not be afraid of

Dan (26:54)

Right. Yeah.

Shimin (27:16)

everybody kind of crossing the work stream a little bit as long as we still do it respectfully at the end of the day where we still respect everyone's expertise and not use it as a way to hammer tickets over each other's head.

Dan (27:31)

Right. I think the other thing that we could see happen there too is like really instead of like as the lines blur a little bit, like the important thing will be the lens that you bring to it. Right. Because like engineering at the end of the day, we're responsible for really delivery and quality. Right. Of it. And product and design are

Shimin (27:42)

Mm-hmm.

Dan (27:52)

responsible for like separately one, sort of making money for the company and shipping the stuff. then the other really make, you know, empathizing with the user and making sure that like what we're doing is, is solving for the actual problem that we're trying to do. Right. So it's that is I've always seen that as a very healthy and necessary tension in the sort of product development process.

Even if the lines blur, what might not blur is like, okay, as an engineer, I'm going to focus on, you know, anyone can ship, cool. But at the end of the day, I'm the one getting paged. You aren't. So I'm going to make sure that what you're shipping still makes sense. And I want product to make sure that what we're doing still is going to make the company money and is solving like a real user problem, and design, you know, doing it in the best way possible.

Shimin (28:38)

Yeah,

absolutely. I think the real gem of the article is buried like three quarters of the way down, where Justin talks about, for example, what if product manager and engineering did more AI-driven pair programming? This comes in the what happens after the standoff discussion. And I think that sounds wonderful. I've never heard of this approach before, but with AI, that becomes a possibility. Like, just grab a designer, let's...

Dan (28:44)

Yeah.

Shimin (29:03)

I ensure code quality and you iterate on the design, and let's just like put four hours together and put our heads together and create something great together. This is a really bright future in my opinion.

Dan (29:13)

Yeah. And it really, like, to me, gets to the core of like, what makes a good team good, right? It's sort of intangibles around like how you can get together, how you can communicate, and then how you can just be really effective at solving the actual problems instead of bickering or, you know, nonsense.

Shimin (29:19)

Mm-hmm.

Yeah

Yeah, if AI is an accelerator, then maybe it also is a force multiplier for your team culture. The good teams will move faster and the bad teams... Yes. So yeah, this is a really great article. Yeah, thank you for bringing it up.

Dan (29:45)

Yeah.

And then we'll probably explode faster in this. Yeah, that's fair.

anytime.

Shimin (29:56)

All right, my post of the week is from the OpenAI blog. I think OpenAI heard about our complaints that they don't write enough good technical blogs and they decided to catch up.

Dan (30:07)

Yeah, they're leveling

up. More ways than one. I'm hearing a lot of noise about Codex right now too, so that's timely.

Shimin (30:11)

It was really great.

Me too.

Yeah, I need to give 5.4 a spin in the old Codex. I don't think it's available in Codex yet, but anyways. The article is titled "Harness Engineering: Leveraging Codex in an Agent-First World." And it's essentially a summary of how they spent five months building an internal tool using only agentic coding. So zero lines of manually written code was their goal and...

they essentially summarize their learnings. So I want to call out a few big ones. The first is that since agents are writing all the code, humans are not always reviewing every single line of code in this particular experience. And they, of course, develop their harness in order to get

to produce this particular suite of software. Humans have two jobs here: seeing and validating the outcome of the agent-generated code, and also noticing when an agent is struggling and then updating the process inside the harness to help the agents get unstuck and improve. Which, yeah, this might be a glimpse of where...

where we're going to. I think a lot of high performing teams have already moved to this paradigm.

The next thing they called out as being really helpful is to give agents tools to use as feedback mechanisms. And it's something we already know, right? Like, give agents Playwright so they can verify their code's correct. But in this case, they provided their agent with the Chrome DevTools MCP to validate their work, which is even more powerful. So.

Dan (31:39)

Uh-huh.

Shimin (31:47)

Again, between the DevTools and they also give them telemetry observability APIs so then the agents can reflect on real world usage and essentially treating the agent like a full part of the team, a full-fledged software developer.

The next big one they called out is AGENTS.md should not be a thousand-page instruction manual. It should instead be a map. Essentially treating your AGENTS.md as a table of contents linking to specific, you know, more detailed descriptions of other things in order to preserve context, to

Dan (32:13)

Mm-hmm.

Shimin (32:24)

prevent rot and also to make it easier to verify. This progressive disclosure principle that I believe we've talked about on previous episodes. So OpenAI is also using them to get their agents to...

solve the context issue. Yeah. I don't know if that's something that you've experienced playing around with, Dan.
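A minimal sketch of how the "map, not manual" idea could be kept honest, assuming the map is a markdown file whose entries are relative links to more detailed docs. The function name and file layout are hypothetical and are not taken from the OpenAI article; the check only flags links whose targets no longer exist on disk.

```python
import re
from pathlib import Path

# Matches markdown links of the form [text](target) and captures the target.
LINK_PATTERN = re.compile(r"\[[^\]]*\]\(([^)\s]+)\)")


def find_broken_links(agents_md: Path) -> list[str]:
    """Return relative link targets in agents_md that do not exist on disk."""
    root = agents_md.parent
    broken = []
    for target in LINK_PATTERN.findall(agents_md.read_text(encoding="utf-8")):
        # External URLs and in-page anchors are out of scope for this check.
        if target.startswith(("http://", "https://", "#", "mailto:")):
            continue
        # Drop any "#section" suffix before checking the file itself.
        path_part = target.split("#", 1)[0]
        if not (root / path_part).exists():
            broken.append(target)
    return broken
```

Run in CI, a check like this would fail the build when the map points at a doc that was moved or deleted, which is one cheap guard against the rot discussed here.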

Dan (32:45)

I, well, can tell you I've worked on a couple of code bases that have a rather extensive AGENTS.md and, like, the number of times I've thought to myself where I'm just like, I wish I could, in fact, I even have, a couple of times, just deleted it locally, because I think that the piece that really stood out to me in the article is, like, humans, like, stop updating it or maintaining it and caring about it. And like, you know, an app is a living

thing to some degree, right? Or a codebase is, right? So it's always, like, evolving, you know, on a daily basis, hourly basis. So that really struck me as, it's like, yeah, it's like, we have prompt debt now too? In addition to technical debt. Yeah. No technical debt on this project. Our prompt debt is crazy. So yeah.

Shimin (33:23)

We do, we absolutely do.

And then they talked about a few things that I haven't really had a chance to incorporate into my own workflow yet, but they started enforcing architectural boundaries and then taste via invariants and then using custom linters and structural tests along with their taste invariant tests. This, you know, usually

Invariants, strict boundary conditions, and testing are things that, you know, for small to medium projects are way overkill. But in their experience, the stricter the linting, the stricter the boundaries are, the better the agents can perform. So even small to medium software projects should kind of use these heavyweight tools to get the most out of agents. This is also something that I saw with the

verified spec-driven development process: they use a lot of boundary condition testing and invariant testing. And essentially, if you could, proof of correctness, right? Like, these heavier-weight tools are being incorporated.
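As a rough illustration of what a custom structural lint can look like, here is a sketch that enforces a layering rule on imports. The layer names and the `ALLOWED_IMPORTS` table are hypothetical and are not the rules from the OpenAI article; the point is only that a boundary can be encoded once and then applied to every file an agent touches.

```python
import ast

# Hypothetical layering rule: each layer may import only from the layers listed.
ALLOWED_IMPORTS = {
    "ui": {"service"},
    "service": {"storage"},
    "storage": set(),
}


def boundary_violations(layer: str, source: str) -> list[str]:
    """Return imports in `source` that `layer` is not allowed to make."""
    allowed = ALLOWED_IMPORTS[layer] | {layer}
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue
        for name in names:
            top = name.split(".")[0]
            # Only imports of known layers are checked; stdlib and
            # third-party packages pass through untouched.
            if top in ALLOWED_IMPORTS and top not in allowed:
                violations.append(name)
    return violations
```

For example, `boundary_violations("ui", "import storage.db")` reports `storage.db`, because in this made-up layering the UI is meant to go through the service layer.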

Dan (34:31)

What's

the first formally verified LLM-driven project? Just for the record, I have zero experience with formal verification.

Shimin (34:40)

Yeah, someone will create one and I'm excited to find out about it. Yeah, they call out that in a human-first workflow, these rules might feel pedantic or constraining, but with agents, they become multipliers. Once encoded, they apply everywhere at once. And I think one of the bigger changes to the software development cycle is gonna be everybody becoming much more familiar with

with linters and invariants.

Dan (35:05)

Yeah, I was joking around earlier about the prompt debt thing. Like, I wonder, on the previous episodes, we talked about cognitive debt, right? And I wonder if this is necessarily a good thing for cognitive debt on a code base. Like if you do, I mean, maybe for an internal tool or something, you don't necessarily care about it. Or maybe I'm just thinking about this in an old-fashioned way. I don't know. But, um,

Shimin (35:15)

Mm-hmm. Yep.

Dan (35:33)

Like, if you're bringing all this heavyweight stuff into play, is it going to be harder to reason about how the actual software works as a human?

Shimin (35:44)

Probably, but you're also probably catching more edge cases.

Dan (35:47)

Yeah.

Do those edge cases matter? Sometimes they do, sometimes they don't. Yeah, interesting. Yeah.

Shimin (35:50)

Well, that's when taste comes

in. And the last thing, and this is truly eye-opening for me, is they talk about code garbage collection, which is basically a recurring cleanup process that they included in their workflow to clean up AI slop. So when things are

duplicated, when things could be better refactored. They run it like garbage collection periodically, which I think is kind of genius, to be honest. If it's not something that folks are doing already, this is clearly gonna be something every single process will have.
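One piece of a periodic cleanup pass like that could be a duplicate finder. The sketch below is an assumption about how such a step might work and is not the process described in the article: it groups Python functions whose bodies parse to the same syntax tree, ignoring the function's own name and its position in the file.

```python
import ast
import hashlib
from collections import defaultdict


def duplicate_functions(sources: dict[str, str]) -> list[list[str]]:
    """Group functions whose bodies are structurally identical.

    `sources` maps a file name to its source text. Returns groups of
    "file:function" labels, each group with two or more members.
    """
    groups = defaultdict(list)
    for filename, text in sources.items():
        for node in ast.walk(ast.parse(text)):
            if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
                # Dump only the body, so the function's own name is ignored.
                # ast.dump omits line numbers by default.
                body = "".join(ast.dump(stmt) for stmt in node.body)
                digest = hashlib.sha256(body.encode()).hexdigest()
                groups[digest].append(f"{filename}:{node.name}")
    return [sorted(labels) for labels in groups.values() if len(labels) > 1]
```

This is deliberately crude: it only catches exact structural copies, so renamed variables or reordered statements slip through. A recurring agent task could take the groups it reports as a starting list of refactoring candidates.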

Dan (36:15)

Mm-hmm.

I've seen that as like a standalone use case too, where people have suggested like, you know, maybe you don't trust like full on agents to just go wild in your code base, but like one of the things that's neat is like spin up five or 10 Claudes and either have them look at your like, you know, dependency backlog or something like that, that you need to update or just go find some debt on your own and fix it. And then it'll, you know, open up. PR

and you can look at it and potentially like lot of I don't know, broken window-y kind of stuff. It's like, not as important as the front door doesn't work, but it just kind of gets left in a code base. It's not even really tech debt It's just like, I mean, I guess it is, but it's like small, smalls, you know? It's a cool way to handle it.

Shimin (37:13)

Yeah.

Yeah,

This is a very good article in my opinion. I do want to say, I wish they had told us what this tool actually does and who uses it. Instead of just saying, this is like an internal tool that we have lots of power users. that doesn't mean much to me. So we'd love to be able to see the source code and even maybe see the commits to see what their system actually looked like.

Dan (37:26)

The internal tool,

Shimin (37:39)

That will make it definitely more useful, but...

Dan (37:41)

Or even just

the harness would probably be pretty fascinating too.

Shimin (37:44)

Yeah, yeah. So OpenAI, good work, but you you can do better guys. Show us the code next time.

Dan (37:51)

Show me that was the CEO's name from the vending machine experiment. It's like Dr. Money or something like that. don't remember. Yeah. Yeah. Show us the doctor.

Shimin (37:59)

Yeah, something like that. cash, cash, king, something with cash.

You

Yeah, so that was my post-processing. Why don't we move on to Vibe and Tell where Dan, you're going to share with us your...

What exactly?

Dan (38:17)

My little

experiment. if you're with us on one of the mediums that has imagery, you're looking at a little black box. If you are listening through headphones at home or whatever, however you're taking a podcast, what you're looking at is a cluster board that I have put together as a personal project that has four, let's see, what are they?

3366s or something like that. I don't know, it's like a fairly up-to-date Rockchip, little Arm system in there. And there's this company, I don't wanna plug them too hard, but pretty neat. It's called Turing Pi and they make a cluster board. So essentially you can run either a mix of Raspberry Pis, their custom chip, which is RK1, which is what I have in this, or

Shimin (38:56)

Mm-hmm.

Dan (39:04)

Nvidia Jetson, modules in there. So you can mix and match however you want, depending on what your project is. And then the actual board itself is pretty neat too. It has like, yeah, if I didn't mention this, I'm a huge nerd outside of just an AI podcast. yeah. So, long story short, I bought this, to learn Kubernetes without all of the like sort of, you know,

Shimin (39:05)

okay.

No, you're... Dan loves tiny computers.

Dan (39:28)

tooling you typically encounter out in the wild on top of it. Like I wanted to just sort of figure out how like bare metal works and learn all that stuff. So mission accomplished and then started like got, didn't have any like serious workloads or anything to run on it. So then it started collecting dust and I'm like, well, I've got this cool little board. What am I doing with it? And so I actually talked to Claude about it. So that's how this whole thing started. And I'm like, I've got this, this board, I'm like, don't.

have any workloads that are really useful on Kubernetes? And so Claude's like, what are you using it for? And I'm like, well, I'm running a couple little stupid home-labby services on it, but I don't use them seriously because, unlike cloud Kubernetes where your database or whatever is living outside of kube, I was actually hosting Postgres in Kubernetes and I was using Longhorn. So sorry, I was getting like crazy

Shimin (40:10)

Mm-hmm.

Dan (40:18)

Uh, off the rails, but so Longhorn's like a, I don't know how to describe it, like a storage layer essentially that you can run on bare metal. I was just, in the back of my mind, I'm like, you know, I know Kubernetes now, but I don't know it so well that, like, I'd feel comfortable restoring, like, critical home lab stuff from that.

If something went wrong, like if I lost one of the nodes' disks or something like that. Yeah. The other cool part is it's got four NVMes on the back of it too. So they each have a nice little SSD. So Claude suggested, well, why don't you just put an individual OS on there? 'Cause I was running this thing called Talos, which is like an API-driven Linux that lets you configure it via YAML just like kube. So you can, like, actually configure the cluster that way. It's kind of cool.

Shimin (40:43)

Mmm. Nice.

Mm-hmm.

Wait, is

that not Nix that you were telling me about? Or did they rename to Talos? Okay. Nevermind.

Dan (41:04)

Uh-uh.

No, Talos is a, it's

like a Kubernetes-forward OS. It's kind of like K3s if, like, the OS was baked into it too. So, pretty, I don't know. I thought it was interesting just to play around with it. It's pretty easy to set up once you get the basics of it. I had that running in a cluster, learned some stuff, whatever. So Claude's like, just run Linux on it. I'm like, cool, neat idea. The only problem with that is the only images that

exist out in the wild are supposedly Debian, which I could not get to work. 'Cause there's basically like one or two images posted on their Discord. So it's already pretty sketchy, like just downloading a random disk off a Discord and running it on this thing. And then on top of that, it didn't actually boot, like it wouldn't get past the U-Boot bootloader. I was like, hmm, that's weird. So I was like, well, Claude, yeah. Or the officially supported image, which is Ubuntu and like, you know,

Shimin (41:33)

Mm-hmm.

Dan (41:54)

This is really a Dan's rant episode. like, just, I hate Ubuntu I'm sorry. I do. I run Arch. I like running Arch. I know how Arch works. Like it makes me happy. So yes, by the way, whatever, insert memes here. So I really wanted to run Arch on these things. So I'm like, Claude, how do we get Arch working? So.

Vibe coded it. Well, it took a couple of goes and a lot of back and forth and some flashing to this thing. But it basically wrote a shell script that produced a working image for Arch Linux for the RK1, which I thought was really cool. And it's not something I would have been able to do on my own because essentially it grabbed a working U-Boot from

Shimin (42:13)

Mm-hmm.

Dan (42:36)

someone's Alpine repo that was out there on the internet. Thanks to that guy for the working U-Boot image. And then it, like, mashes it together using the, like, pretty standard Arch Linux ARM installer tarballs and made it work. Then it, like, set some flags for U-Boot so it'll actually correctly read it off the MMC. So it worked. I was like, holy cow. Yes. And I have four little Arch machines humming happily away in that

Shimin (42:39)

Hmm.

Dan (43:02)

in that case. ⁓

Shimin (43:04)

You did

all that just because you didn't want to use Ubuntu. As a Ubuntu user, I feel kind of insulted by that. But that could be for an After Dark episode, where Dan talks about what he doesn't like about Ubuntu.

Dan (43:07)

you

I mean, it's a stupid reason why I don't like it. Like, just full transparency. Like, I run everything I can, right? Like, I've got a Windows machine. I have a Mac. I run Linux, I have multiple Linux machines, different flavors of Linux. The only thing I have against Ubuntu is it's, like, slightly more friction to install the software that I want to install on it. And I've gotten really used to, like, distros that have less friction to install stuff. And that's really my only complaint.

And, I guess, two complaints. The other one is for a long time I was also running relatively newer hardware for Linux, and the kernel that ships with Ubuntu is always old. And I feel like it gives Linux overall kind of a bad rap because you boot this kernel and it's like, nothing works on my laptop. And it's like, well, if you're running the latest, greatest kernel, you'd be surprised how much stuff actually works out of the box. And, like, really how shockingly good the overall experience is, considering it's free. So.

Shimin (44:11)

That's fair. The number of times I have to fix a black screen of death because of the GPU kernels. Yeah, okay. That's fair. I guess the follow-up question would be...

Did you verify the code or did you just consider this to be a pure Vibe coding project?

Dan (44:26)

I read

it. I wanted to know what was going on, but I certainly didn't look at the U-Boot code or anything like that. It was taking a minimal bootloader from a project that was for loading Alpine. Yeah, that's one of the RK1 modules there when I was putting it all together. And then glued the Arch, you know,

Shimin (44:33)

Alright.

Dan (44:47)

Arch ARM tarball to it essentially in a way that I probably could have figured out given enough time. Like, I don't know, this was like maybe an hour and a half. Actually, it didn't boot the first time. So I took the U-Boot output and pasted it into Claude. And it goes, here's the problem. And it changed like two things in the script and then it worked and that was it. And then the only other thing I did to really, like, hack on it was

Shimin (44:56)

Right.

Dan (45:12)

For some reason, the device tree that ships with Arch ARM includes this RK33, whatever number I said earlier, chip. So it has a working DTB for it, but it was missing the lower clock speeds for some reason. So we also hacked together a custom one. Claude installed tools to...

decompose the DTB into JSON, patch it, and then recompile it back to DTB with the correct stuff in there. And that one I was a little nervous about because I'm like, you can actually potentially break your, not break your hardware, but you could do real stuff. You mess up voltage or whatever in the DTB and it worked. So I was like, cool.
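A sketch of what a decompile, patch, recompile round trip can look like with the standard device tree compiler, `dtc`. This is an assumption about the general shape of the workflow and not the script from the episode: `dtc` converts between binary DTB and DTS text (not JSON), the file paths are hypothetical, and `patch` stands in for whatever edit is needed. As noted above, wrong voltage or clock values in a DTB can cause real problems, so a patch like this deserves a careful read before flashing.

```python
import subprocess
from typing import Callable


def dtc_decompile_cmd(dtb_path: str, dts_path: str) -> list[str]:
    """Build the dtc command that turns a binary DTB into DTS text."""
    return ["dtc", "-I", "dtb", "-O", "dts", "-o", dts_path, dtb_path]


def dtc_compile_cmd(dts_path: str, dtb_path: str) -> list[str]:
    """Build the dtc command that turns DTS text back into a binary DTB."""
    return ["dtc", "-I", "dts", "-O", "dtb", "-o", dtb_path, dts_path]


def round_trip(dtb_in: str, dts_work: str, dtb_out: str,
               patch: Callable[[str], str]) -> None:
    """Decompile dtb_in, apply `patch` to the DTS text, and compile dtb_out.

    Requires the `dtc` binary on PATH; raises CalledProcessError on failure.
    """
    subprocess.run(dtc_decompile_cmd(dtb_in, dts_work), check=True)
    with open(dts_work, encoding="utf-8") as f:
        text = f.read()
    with open(dts_work, "w", encoding="utf-8") as f:
        f.write(patch(text))
    subprocess.run(dtc_compile_cmd(dts_work, dtb_out), check=True)
```

Writing the result to a new file and leaving the original DTB untouched keeps a known-good copy to boot from if the patched one misbehaves.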

Shimin (45:42)

Brick. Yes.

All right, well

AI is taking OS developer jobs. You heard it here first. No, it is, right? Like it's custom OS image editing. Yeah.

Dan (45:54)

Not develop, but it's like, you know, building an image for. Yeah.

Yeah. So that was

maybe a pretty convoluted vibe and tell. So sorry for all the folks out there who were like Linux what, but, it was a, me, it was a fun little project. Yeah.

Shimin (46:09)

This is a podcast for developers. If

you don't know what Linux is, I suggest you find a different podcast. Just kidding. I'm probably going to cut that out.

Dan (46:13)

you

Aww.

Yeah,

that numbers dropped to like three listeners next.

Shimin (46:23)

Okay. Well,

I also have a vibe and tell this week. 'Cause you know, can't just let Dan out-vibe me. I was talking about verified spec-driven development the other week. This is something that I've been working on. It's called Flatterproof. And what it is, is it is a training module to help really everyday

users of AI to become familiarized with and also to be able to notice and essentially become immune to AI's sycophancy. The AI's tendency to agree with you and flatter you and kind of trick you into making bad decisions. And the reason why I started this was because I was just chatting with

AI for some personal stuff the other day and I was noticing that I was kind of taking AI too seriously because it's so flattering. And then I noticed to myself, like, wait, hold on, I know everything, well, not everything, but I know a lot about AI, certainly more than the average person, and if it's tricking me then it is certainly tricking all of my friends who are also using AI: accountants, doctors, et cetera,

Dan (47:20)

Yeah.

Shimin (47:30)

probably with less pushback. So it's a pretty straightforward Next.js app. It has, essentially, you see a test case where a user is chatting with an AI assistant and then the user should just highlight

what kind of AI sycophancy pattern that the AI is using on you, submit, and then create a counter prompt for what you should say instead to make sure that the AI is not bullshitting you. And then it gives you a nice little animation and gives you a score. And you can go through a list of patterns. There are something like 21 patterns.

for you to learn about and each pattern has, you know, resources cited so you can go back to where that pattern appears to begin with. And it's got modules, dashboard, et cetera, et cetera. It's fairly vibe coded. I did verify that all the patterns did exist inside the research that they've cited, but...

But otherwise, I didn't personally handwrite every single test module cases, for example. Now, a few things I wanted to share in my learnings while kind of vibe coding this project. First being that you could just ask AI, use Playwright, and then run through the entire user workflow and look for bugs and write them down and fix them later.

Like again, having tools for it to even polish UI is now possible. And that's something that I think everyone should start doing if you haven't already started doing it.

And then the second big one, and this is kind of exciting for me, recall when we were talking about Gastown and how ⁓ there was a warning in the original Gastown blog post that was like, hey, if you're not using four or five agents at once, Gastown is not for you.

Dan (49:14)

yes.

You

Shimin (49:27)

And you know, at the time I was using like maybe three agents on different repos at the same time. But this is the first project where I had like four or five concurrent agents running on different work trees. And like I have one agent working on the icons, I had another agent finding the bugs in Playwright. I had another agent working on I think some backend routes.

I had like four or five agents running at once. So I finally reached that vibe coding nirvana and now I'm ready to use Gastown for realsies. So it's kind of cool. Yeah, I have to give Wasteland a shot. Yes. I'm ready. Getting ready to be witnessed here. That's pretty cool. As long as you're just vibe coding.

Dan (49:57)

⁓ just in time for Wasteland, which we'll have to talk about in future episode.

which I won't even get into it. Yeah, we'll save it for a future episode.

So how long did

this take to make is my first question.

Shimin (50:19)

I think a couple hours from my initial just high level spec to having something working and then I spent maybe another Five six hours ⁓

Dan (50:29)

So like

80-20 rule, basically.

Shimin (50:30)

Yeah, it's, you know, software is never

and all that good stuff.

Dan (50:33)

So like 10 total.

Shimin (50:34)

Yeah, I will say 10ish, give or take. Which is not bad. And it's not all active, right? So 10ish, yeah. And this would take me, I mean, I would just have never worked on a project like this, because it would take too many weekends away. So it's really, yeah, exactly. For myself, yeah. Although I did, I-

Dan (50:47)

Yeah. And the payoff isn't high enough for yourself anyway. Yeah. Hopefully it is for others. Check it out.

Flatterproof.me.

Shimin (50:56)

I did learn the,

I did go through every single module. So I feel like it does work. I do find myself reaching for counter prompt techniques when I'm using AI day to day. It's like, yeah, it's clearly doing this. I should ask about that. So that's really nice.

Dan (51:11)

I at one point had put in, I think it was Claude, 'cause Claude supports those, like, styles or something like that. Or maybe it's OpenAI, I forget. One of the ones that had, like, a dealy. Someone on the internet posted this prompt that was like, how do I get it to stop being, like, sycophantic, you know? And so I pasted it in and started running it and, like, sure enough, it largely stopped doing that.

Shimin (51:17)

Mm-hmm.

Mm-hmm.

Dan (51:35)

But it got really mean, which I thought was hilarious. It's like, where's the middle ground? we can't go. Not like that's a dumb idea or anything, but it was just like, like extremely dismissive and like kind of caustic in the language, which I was just like, wow, why can't we get somewhere in the middle? It's like the same default, you know?

Shimin (51:50)

Yeah.

Yeah, as someone who has people-pleasing tendencies at times, I asked AI to critique some of my writing and then I used a prompt that works like 80% of the time. This is all the prompt you need, all the prompting you need, which is like, given what you know about AI sycophancy, what do you think of the response you just sent me? And it will usually correct itself to like a neutral ground, but 20% of the time

Dan (52:17)

Hahaha

You

Shimin (52:23)

It would just rip me a new one, dude. I was like, this is good training for me to, like, toughen up even though it's just an AI. Yeah. And I used Google Stitch for the UI for this app, which is way better than what Claude came up with style-wise. So I would recommend checking out Stitch

Dan (52:29)

Yeah.

Shimin (52:44)

if you were to do any kind of front-end UI work. And lastly, I just want to point out that AI is surprisingly good at drawing icons. Like these are all custom icons as opposed to the generic material icons for each pattern.

Dan (52:56)

We have the

fox's bow with like a little fox face. Emperor's tailor with some scissors. Nodding knight. I guess that's a knight. I'm not a huge fan of the knight one. And then there's a mockingbird, which is definitely a little mockingbird. I like it.

Shimin (53:00)

Mm-hmm.

Yeah, and what Claude ended up doing was Claude would just create a new page with different rendering of the SVG icons and then take Playwright snapshots and then ask me for comments so we can iterate on that.

Dan (53:21)

Look at, oh,

ask you for, it's a reverse centaur in action. Is this one good?

Shimin (53:29)

Yeah, but I reserved the final judgment. I tell it to add a little more detail or like I like this variant better. ⁓ So also give that a shot. I would not have pictured AI doing this like a year ago even, a year and a half ago. So yeah, very impressive. ⁓

Dan (53:35)

Yeah.

That's true. Yeah. Especially like

decent looking icons that aren't just like a bunch of like disconnected lines with three fingers and you're like, why are there fingers in this? I don't even.

Shimin (53:52)

Right.

Yeah, it's not super surprising knowing what we know about the, you know, Simon Willison's pelican riding a bicycle examples as the one-shot SVG drawing, but seeing it for your own use case, it's still impressive. Yeah. Okay. Shall we move on to two minutes to midnight?

Dan (54:11)

Yeah, last but certainly not least.

Shimin (54:13)

Right, this is the segment where we discuss where we are in the AI bubble clock, similar to the nuclear clock from the Bulletin of the Atomic Scientists. Where midnight...

Dan (54:26)

Only instead of an exchange

at the top, it's maybe one of the Frontier Labs collapsing. I don't know. We'll see. Hopefully we won't see.

Shimin (54:32)

Yeah, bring down the

whole market and the US economy with it. My article of the week is "Oracle Plans Thousands of Job Cuts as Data Center Costs Rise, Bloomberg News Reports." Well, this is a Reuters article on Bloomberg News's reporting of Oracle's thousands of job cuts. Oracle's had a pretty

pretty rough couple of weeks, or really all year. Their deal with OpenAI is not doing so great. I think some of the consensus I've been seeing on the interweb is Oracle is building today's data center for tomorrow, AKA they're using today's architecture as their blueprint when tomorrow there will be better GPUs. So OpenAI is pulling out

of their joint Stargate investment and now Oracle is cutting lots of jobs. Dan, you think OpenAI is the canary in a coal mine. My suspicion is Oracle is a canary in a coal mine. So this is not looking good for them. That being said, as we are recording this, there was just news saying that Oracle beat revenue and for the

Dan (55:31)

Yeah.

Shimin (55:40)

first quarter of the year, and their stock went up 9%, so who knows. But I don't think it's a good sign that Oracle is cutting. Yes, and this is not financial advice.

Dan (55:46)

As always, we're not really financial analysts. We're

just having fun here. Yeah, that's pretty interesting.

I wouldn't have pegged Oracle as the canary, but I kind of buy it after what you're saying. Because it's like if they were one of the, you know, building some of the foundations, then I guess, the foundations might go even before the frontier lab.

Shimin (56:10)

Yeah, and Oracle has a ton of debt that they need to service. They took on a ton of debt to build these data centers. So if at some point they stop payment on that, I think it's going to bring the whole house of cards down.

Dan (56:22)

interesting.

Um, so I am bringing, uh, a little bit less direct article this time, but still kind of an interesting one to talk about in general terms as well, not necessarily through the lens of, two minutes, but, uh, Ars Technica is reporting that, after some like pretty significant

downtime, I believe the Amazon app itself actually going down for six hours. called, well, they sensationalize it a little bit. basically imply like they called in all hands with all these senior engineers to talk about it. But to me, it sounds like a pretty standard incident review meeting that a lot of companies do on a cadence.

Shimin (56:49)

Mm-hmm.

Mm-hmm.

Dan (57:03)

I'm not sure this is anything different, but I think the thing that was different was they were looking at how many of these incidents were caused, most notably that one where it took down the price calculator tool by using Kiro, which is their in-house ⁓ agentic coding setup.

Shimin (57:18)

Mm-hmm.

Dan (57:21)

to do some Terraform or whatever, and it decided to nuke the production database as part of whatever apply they did. So that was kind of the...

Shimin (57:23)

Mm-hmm.

I I use Kiro

at work. It's not, it's not bad. I just have to say, I wouldn't blame this all on Kiro.

Dan (57:34)

Yeah, I wasn't.

No, it wasn't meant to be a diss of the tool at all. It was just, like, you know, it wouldn't surprise me if lots and lots of the, you know, like, people are loving to complain about GitHub right now, right? Because they keep having so much downtime. Like, I don't think we get super detailed incident reviews out of them, but I wonder how many of those were caused by similar things. So like.

Shimin (57:50)

Mm-hmm.

Mmm.

Dan (58:00)

The reason why think it's relevant to two minutes is it's like, we're maybe now seeing like actual business costs over and above like code becoming cheap on like using some of these things in production. like, I've, you know, sort of long stood by my argument that like, this is great if you're a startup and you have finite runway and you need to vibe code a massive amount of code really quickly to go get your idea across or get off the ground.

but potentially less good or less useful if you're a fundamental service provider for some section of the internet, especially AWS or some of the other cloud providers. And if you go down, there's extreme economic costs. So,

Yeah, just interesting to see where that might take us in terms of like the appetite to continue adopting this, you know, like every company versus only certain slices of it.

Shimin (58:49)

Yeah, there's a line here that says, according to the meeting, junior and mid-level engineers will now require more senior engineers to sign off any AI assisted changes. That's job security for senior engineers. But also...

Dan (59:02)

Yeah, but that wasn't, it

was never, well not never, but it's been less in doubt than Junior roles, right? And this whole thing, unfortunately.

Shimin (59:12)

Yeah, yeah, but like, you know, just cause a senior has signed off on some code changes doesn't mean it's gonna necessarily be good, right? Like senior engineers will not just become code review machines all day, cause that is unsustainable. It will burn developers out.

Dan (59:28)

Yeah, well, and review fatigue of anything, really, not just code, is a real, like, just human phenomenon. And, like, if you've ever gone to an art museum or something, right, after your, like, 300th painting, you're like, hmm, look, that one's great. You know, yeah. Which I've often wondered if that's why they organize

Shimin (59:43)

Another naked lady. Oof. I've seen that before.

Dan (59:51)

either period or thematic wings at the really big museums. So then you can sort take it in as a gestalt instead of actually burning out on every single painting.

Shimin (1:00:00)

That makes a lot of sense, I've never thought of it. The museum curators probably do design the experience for the end user.

Dan (1:00:01)

Yeah.

But a lot of random things. It's not just AI or tiny computers. Yeah.

Shimin (1:00:08)

You should rant about them.

Oh and tanks, of course. Okay so all that said, where do we stand at the end of this week? We were at 2 minutes and 45 last week.

Dan (1:00:13)

Thanks.

Yeah, we were feeling pretty good. We'd backed it off.

This is one of the things where you need to have the AI look at our transcripts and tell me, are we just bouncing back and forth every single week? Because my first inclination is, on the Oracle news, push a little bit forward.

Shimin (1:00:35)

Yes, that's my first instinct as well. I mean, it's not just Oracle. It's also the Anthropic DoD situation.

Dan (1:00:42)

Yeah,

yeah, yeah, that's fair. And also, you you could look at the sort of macro stuff too and be like, that's not going to help, which we won't get into on this show.

Shimin (1:00:50)

Well,

good thing tankers do not carry silicon. Let's just put it that way.

Dan (1:00:56)

Yeah.

Yeah. Yeah, okay. Well, I'm forward if you are bouncing, what do you think, 15 or 30 forward? Or more than that. ⁓ wow, okay.

Shimin (1:01:06)

I'm going to go more. I'm going to go a minute forward.

All right. Yeah. OK.

Dan (1:01:11)

I could do that. But I do do

I am curious. You should, if you think of it, do this because you're the owner of all the transcripts for the next show. Tell me if we're just bouncing back and forth, because it would be pretty funny to see that if we're just like literally seesaw every other episode.

Shimin (1:01:25)

I will get

just like the market, you know, every trading day, it does something different. Well, a minute and 45 seconds it is. And of course, with the clock being set, we come to the end of the show. Thank you for joining us today in our little news roundup and study session. If you like the show, if you learned something new, please share the show with a friend.

Dan (1:01:30)

That's true, yeah.

Shimin (1:01:48)

And you can also leave us a review on Apple Podcasts or Spotify. It helps others discover the show. And we really appreciate it. If you have a segment idea, a question for us, or just want to say hi, shoot us an email at humans at adipod.ai. We'd love to hear from you. You can find the full show notes, transcripts, and everything else mentioned today at www.adipod.ai. Thank you again for listening and we'll catch you next week.

Bye.