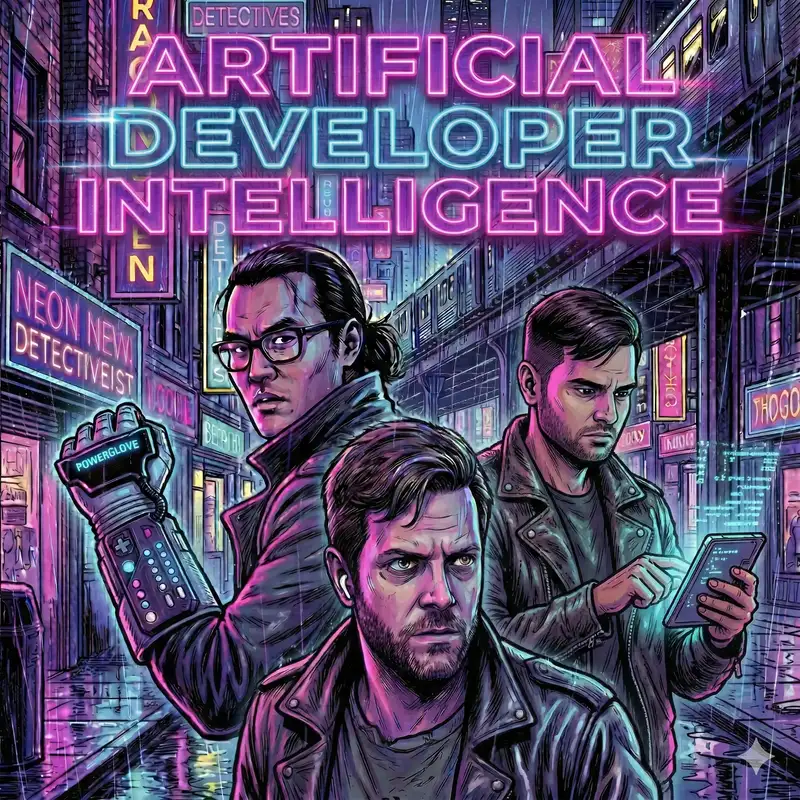

Ep 19: Thinking Fast Slow and Artificial, Meta's Trouble with Rogue Agents, and FOMO in the Age of AI

This week, Rahul, Shimin, and Dan cover Claude Code's new channels and scheduling features, a Meta security incident caused by AI-generated advice, Anthropic's survey of 81,000 people on AI expectations, Dan's vibe-coded vector memory CLI project, a deep dive on the paper "Thinking, Fast, Slow and Artificial" about cognitive surrender to AI, a rant about AI tokens as employee compensation, and bubble watch updates including NVIDIA's trillion-dollar demand projections and OpenAI shutting down Sora.

Takeaways:

- Claude Code is rapidly absorbing community-developed workflows — the moat may only be in the general model capabilities, not tooling

- The Meta incident illustrates the emerging pattern of AI-caused production incidents and the need for process guardrails around agent usage

- Cognitive surrender to AI creates a widening gap: those with high need-for-cognition benefit more while those who dislike effortful thinking defer even more

- AI confidence inflation (12 percentage point boost) may stem from treating AI like authoritative reference material (encyclopedias, Wikipedia)

- Historical technology resistance (Socrates on writing, farmers on tractors) suggests the battle against AI adoption may already be lost

- OpenAI shutting down Sora just 4 months after a 3-year Disney partnership signals deeper financial or strategic issues

Resources Mentioned

Push events into a running session with channels

Perhaps not Boring Technology after all

Meta is having trouble with rogue AI agents

What 81,000 people want from AI

Dan's vec-memory-cli

Thinking—Fast, Slow, and Artificial

Are AI tokens the new signing bonus or just a cost of doing business?

Jensen Huang just put Nvidia’s Blackwell and Vera Rubin sales projections into the $1 trillion stratosphere

Accelerated FOMO in the Age of AI

OpenAI shutters AI video generator Sora in abrupt announcement

Chapters

Connect with ADIPod

- Email us at humans@adipod.ai if you have any feedback, requests, or just want to say hello!

- Check out our website at www.adipod.ai